High Availability Cluster 4.x: Difference between revisions


Revision as of 05:23, 4 December 2015

Introduction

Proxmox VE High Availability Cluster (Proxmox VE HA Cluster) lets you define highly available virtual machines. In simple words, if a virtual machine (VM) is configured as HA and the physical host fails, the VM is automatically restarted on one of the remaining Proxmox VE Cluster nodes.

The Proxmox VE HA Cluster is based on the Proxmox VE HA Manager (pve-ha-manager), which uses watchdog fencing. The major benefit of the Linux softdog or a hardware watchdog is zero configuration: it works out of the box.

To learn more about the functionality of the new Proxmox VE HA manager, install the HA simulator.

Update to the latest version

Before you start, make sure you have installed the latest packages. Run the following on all nodes:

apt-get update && apt-get dist-upgrade

System requirements

If you run HA, high-end server hardware with no single point of failure is required. This includes redundant disks, redundant power supplies, UPS systems, and network bonding.

- Proxmox VE 4.0 comes with self-fencing via a hardware watchdog or a software watchdog.

- Fully configured Proxmox VE 4.x Cluster (version 4.0 and later), with at least 3 nodes (maximum supported configuration: currently 32 nodes per cluster).

- Shared storage (SAN, NAS/NFS, Ceph, DRBD9, ... for virtual disk images)

- Reliable, redundant, suitably configured network that supports multicast

- An extra network for cluster communication, one network for VM traffic and one network for storage traffic.

It is essential that you use redundant network connections (bonding) for the cluster communication. If you do not, a simple switch reboot (or power loss on the switch) can fence all cluster nodes if it takes longer than 60 seconds.

HA Configuration

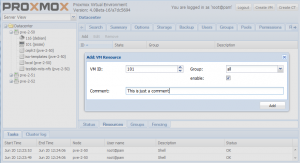

Adding and managing VMs or containers for HA can be done via the GUI or the CLI (`ha-manager add <VMID>`).

Important: before enabling HA for a service, you should test it thoroughly. Check that migration works and that NO local resources are used by it. Make sure that it can run on all nodes defined by its group and, even better, on all cluster nodes.

Fencing

Proxmox VE Cluster 4.0 or greater comes with watchdog fencing. This works out of the box, no configuration is required.

How Watchdog fencing works:

As long as the node is connected to the cluster and has quorum, the watchdog is reset regularly. If quorum is lost, the node is no longer able to reset the watchdog. This triggers a reboot after 60 seconds.

If your hardware has a hardware watchdog, this one will be automatically detected and used. Otherwise, ha-manager just uses the Linux softdog. Therefore testing Proxmox VE HA inside a virtual environment is possible.
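The watchdog rule described above can be sketched as a small shell function. This is only a toy model for illustration, not the code of the watchdog multiplexer: the function name `watchdog_action` and its arguments are made up here, and only the 60-second timeout is taken from the text.

```shell
# Toy model of watchdog self-fencing; NOT the real watchdog-mux code.
# The 60-second timeout is taken from the text above.
WATCHDOG_TIMEOUT=60

# watchdog_action <has_quorum: yes|no> <seconds_since_last_reset>
# (hypothetical helper, prints what happens to the node)
watchdog_action() {
    has_quorum=$1
    elapsed=$2
    if [ "$has_quorum" = "yes" ]; then
        # A quorate node keeps resetting the timer, so it never expires.
        echo "reset"
    elif [ "$elapsed" -ge "$WATCHDOG_TIMEOUT" ]; then
        # No quorum and the timer ran out: the watchdog reboots the node.
        echo "reboot"
    else
        # No quorum, but the timer has not expired yet.
        echo "counting-down"
    fi
}
```

For example, `watchdog_action no 30` prints `counting-down`, while `watchdog_action no 60` prints `reboot`.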

Permissions

From version 1.0-13 of the pve-ha-manager package, the HA stack is better integrated in the permission system of Proxmox VE.

- Creating, deleting or updating a resource or group requires the 'Sys.Console' privilege for the whole cluster (i.e. on the root path '/').

- The current status and the configured resources and groups may be read (but not written) with the 'Sys.Audit' privilege on the root path.

HA Groups

The Proxmox VE HA Cluster uses groups to map VMs to nodes.

For example, suppose "vm100" is in the group "ONE", the group "ONE" has the members "pve1,pve2", and "vm100" is running on "pve1". If "pve1" crashes, "vm100" will be migrated to "pve2".

Proxmox VE HA groups have two options, restricted and nofailback:

- restricted: VMs bound to the group may only run on cluster members which are also members of the group. If no members of the group are available, the service is placed in the stopped state.

- nofailback: Enabling this option for a group will prevent automated fail-back after a more-preferred node rejoins the cluster.
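The effect of the restricted option can be illustrated with a toy placement function. This is a simplified sketch, not the HA manager's actual algorithm: the name `pick_node` is made up, picking the first listed node is an assumption, falling back to any online node for unrestricted groups is inferred from the description above, and nofailback is not modelled.

```shell
# Toy model of group placement; NOT the real pve-ha-manager logic.
# pick_node <restricted: 0|1> <group_nodes> <online_nodes>
# Both node lists are comma-separated, e.g. "pve1,pve2".
pick_node() {
    restricted=$1
    group_nodes=$(echo "$2" | tr ',' ' ')
    online_nodes=$(echo "$3" | tr ',' ' ')
    # Prefer the first group member that is online (assumed ordering).
    for g in $group_nodes; do
        for o in $online_nodes; do
            if [ "$g" = "$o" ]; then
                echo "$g"
                return 0
            fi
        done
    done
    # No group member is online: a restricted service stays stopped.
    if [ "$restricted" = "1" ]; then
        echo "stopped"
        return 0
    fi
    # Unrestricted (assumption): fall back to the first online node.
    for o in $online_nodes; do
        echo "$o"
        return 0
    done
    # Nothing is online at all.
    echo "stopped"
}
```

With the group "ONE" from the example, `pick_node 1 pve1,pve2 pve2,pve3` prints `pve2` once "pve1" is gone, and `pick_node 1 pve1,pve2 pve3` prints `stopped`.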

Enable a VM/CT for HA

On the CLI, you can use ha-manager to achieve this task.

IMPORTANT:

If you enable HA for a VM, it is no longer possible to turn off the VM from inside the guest. Also, if the HA resource is disabled, the VM will be stopped.

If you add a VM/CT, it is instantly 'ha-managed'.

ha-manager add vm:100

A VM/CT can also be added via the GUI.

Disable a VM/CT for HA

If you want to disable an HA-managed VM/CT (e.g. for shutdown) via CLI:

ha-manager disable vm:100

If you want to re-enable an HA-managed VM/CT:

ha-manager enable vm:100

HA Cluster Maintenance (node reboots)

If you need to reboot a node, e.g. because of a kernel update, you need to migrate all VMs/CTs to another node or disable them. Disabling them stops all resources. All VM guests will get an ACPI shutdown request (if this does not work due to VM-internal settings, they will just get a 'stop').

The command will take some time to execute; monitor the "tasks" and the VMs and CTs in the GUI. As soon as the VMs/CTs are either stopped or migrated, you can reboot your node. As soon as the node is up again, continue with the next node, and so on.

Note: When you gracefully shut down a node, its services won't get migrated by the HA stack. You have to migrate them manually before you power off your node (for example for hardware maintenance).
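Disabling several services before a reboot can be scripted with a small loop around `ha-manager disable`. The following is a minimal sketch: the helper names `disable_services` and `enable_services` and the `HA_MANAGER` variable are made up for this example, and the service IDs are placeholders.

```shell
# Command to run; override it for a dry run, e.g. HA_MANAGER=echo.
# (HA_MANAGER is a hypothetical variable used only in this sketch.)
HA_MANAGER=${HA_MANAGER:-ha-manager}

# disable_services <sid>...   e.g. disable_services vm:100 vm:101
# Stops at the first service that cannot be disabled.
disable_services() {
    for sid in "$@"; do
        "$HA_MANAGER" disable "$sid" || return 1
    done
}

# enable_services <sid>...   run this once the node is up again.
enable_services() {
    for sid in "$@"; do
        "$HA_MANAGER" enable "$sid" || return 1
    done
}
```

Setting `HA_MANAGER=echo` before calling the functions only prints the subcommands instead of executing them, which is useful for checking the list of service IDs first.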

HA Simulator

By using the HA simulator you can test and learn all functionalities of the Proxmox VE HA solution.

The simulator allows you to watch and test the behaviour of a real-world 3-node cluster with 6 VMs.

You do not have to set up or configure a real cluster; the HA simulator runs out of the box on the current code base.

Install with apt:

apt-get install pve-ha-simulator

To start the simulator you must have an X11 redirection to your current system.

If you are on a Linux machine you can use:

ssh root@<IPofPVE4> -Y

On Windows, this works with MobaXterm.

After logging in, create a working directory for the simulator:

mkdir working

To start the simulator, type:

pve-ha-simulator working/

Troubleshooting

Error recovery

If a service start fails, the HA stack tries to recover from it with the "restart" and "relocate" policies; see the man page of ha-manager for more information.

If the service state cannot be recovered after all attempts, the service is placed in an error state. In this state the service won't be touched by the HA stack anymore. To recover from this state you should follow these steps:

- bring the resource back into a safe and consistent state (e.g. by killing its process)

- disable the HA resource to place it in a stopped state

- fix the error which led to this failure

- after you have fixed all errors, you may enable the service again

Note: when a service fails to stop, it also gets placed in the error state. You may follow the same steps to recover from it.

IPMI Watchdog

For IPMI watchdogs you may have to set the action, otherwise the watchdog may not do anything when it triggers.

For this purpose edit /etc/modprobe.d/ipmi_watchdog.conf (simply create the file if it does not exist):

options ipmi_watchdog action=power_cycle

NOTE: reboot or reload the ipmi_watchdog module for the changes to take effect.
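Creating the file can also be scripted. The sketch below writes exactly the option line shown above; the helper name `write_ipmi_watchdog_conf` is made up, and the optional path argument exists only so the function can be tried on a scratch file first.

```shell
# write_ipmi_watchdog_conf [path]
# Writes the ipmi_watchdog option line; defaults to the path named above.
# (Hypothetical helper; run it as root when using the default path.)
write_ipmi_watchdog_conf() {
    conf=${1:-/etc/modprobe.d/ipmi_watchdog.conf}
    # Overwrites the file with the single options line.
    printf '%s\n' 'options ipmi_watchdog action=power_cycle' > "$conf"
}

# On the node, as root:
#   write_ipmi_watchdog_conf
# then reboot, or reload the ipmi_watchdog module.
```

Note that the function overwrites an existing file, so check first whether the file already contains other options you want to keep.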

Dell iDRAC

For Dell iDRAC, please deactivate the Automated System Recovery Agent in the iDRAC configuration.

If OpenManage is installed, you need to disable its watchdog management. Edit the file

/opt/dell/srvadmin/etc/srvadmin-isvc/ini/dcwddy64.ini

and comment out the following lines:

;[HWC Configuration]
;watchDogObj.settings=0
;watchDogObj.expiryTime=20

Then reboot the server.

After the restart, check that the watchdog timer is 10 seconds and has not been overridden by OpenManage:

idracadm getsysinfo -w
Watchdog Information:
Recovery Action = Power Cycle
Present countdown value = 9 seconds
Initial countdown value = 10 seconds

Failed watchdog-mux or Multiple Watchdogs

Disable all BIOS watchdog functionality. Those settings set up the watchdog in the expectation that the OS resets it; that is not the desired use case here and may lead to problems, e.g. a reset of the node after a fixed amount of time.

Intel AMT (OS Health Watchdog) should be disabled and with it the mei and mei_me modules, as they may cause problems.

If your host has multiple watchdogs available, only allow the one you want to use for HA, i.e. blacklist the other modules from loading. Our watchdog multiplexer will use /dev/watchdog, which maps to /dev/watchdog0.
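Blacklisting is done with `blacklist` lines in a file under /etc/modprobe.d/. The following is a minimal sketch using the mei and mei_me modules named above; the helper name `blacklist_modules` and the file name in the usage comment are assumptions made for this example.

```shell
# blacklist_modules <file> <module>...
# Appends one "blacklist <module>" line per module to <file>.
# (Hypothetical helper; run it as root for files under /etc/modprobe.d/.)
blacklist_modules() {
    file=$1
    shift
    for mod in "$@"; do
        printf 'blacklist %s\n' "$mod" >> "$file"
    done
}

# Example, as root (the file name is an assumption):
#   blacklist_modules /etc/modprobe.d/watchdog-blacklist.conf mei mei_me
# After a reboot, list the remaining watchdog devices:
#   ls -l /dev/watchdog*
```

Because the function appends, calling it twice with the same module produces duplicate lines; these are harmless but can be avoided by checking the file first.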

Selecting a specific watchdog is not implemented, mainly for this quote from the linux-watchdog mailing list:

The watchdog device node <-> driver mapping is fragile and can change from one kernel version to the next or even across reboot, so users shouldn't assume it to be persistent.

Deleting Nodes From The Cluster

When deleting a node from a HA cluster you have to ensure the following:

- All HA services have been relocated to another node. A graceful shutdown will NOT migrate them automatically.

- Remove the node from all defined groups.

- Shut down the node you want to remove. From now on this node MUST NOT come online in the same network again without being reinstalled or cleared of all cluster traces.

- Execute `pvecm delnode nodename` from a remaining node.

The HA stack now places the node in a 'gone' state; you will still see it in the manager status. After an hour in this state it will be deleted automatically. This ensures that if the node died ungracefully, the services will still be fenced and migrated to another node.

Durations

Note that some HA actions may take some time and do not happen instantly. This avoids out-of-control feedback loops and ensures that the HA stack is, wherever possible, always in a safe and consistent state.

Container

Note that while containers may be put under HA, they currently (PVE4Beta2) don't support live migration. To migrate a container, stop it, migrate it offline and start it again. If a node fails, recovery works once the failed node has been fenced, as long as you don't use locally bound resources.

Video Tutorials

Testing

Before going into production it is highly recommended to do as many tests as possible. Then, do some more.

Useful command line tools

Here is a list of useful CLI tools:

- ha-manager - to manage the HA stack of the cluster

- pvecm - to manage the cluster manager

- corosync* - to manipulate corosync