Multicast notes: Difference between revisions

| Line 140: | Line 140: | ||

== Troubleshooting == | == Troubleshooting == | ||

All of this information has been moved to a Work-in-progress page: [[Troubleshooting multicast, quorum and cluster issues]]) | |||

Revision as of 15:27, 1 April 2016

Introduction

Multicast allows a single transmission to be delivered to multiple servers at the same time.

This is the basis for cluster communications in Proxmox VE 2.0 and higher, which uses corosync and cman, and would apply to any other solution which utilizes those clustering tools.

If multicast does not work in your network infrastructure, you should fix it so that it does. If all else fails, use unicast instead, but beware of the node count limitations with unicast.

IGMP snooping

IGMP snooping prevents flooding multicast traffic to all ports in the broadcast domain by only allowing traffic destined for ports which have solicited such traffic. IGMP snooping is a feature offered by most major switch manufacturers and is often enabled by default on switches. In order for a switch to properly snoop the IGMP traffic, there must be an IGMP querier on the network. If no querier is present, IGMP snooping will actively prevent ALL IGMP/Multicast traffic from being delivered!

If IGMP snooping is disabled, all multicast traffic will be delivered to all ports which may add unnecessary load, potentially allowing a denial of service attack.

IGMP querier

An IGMP querier is a multicast router that generates IGMP queries. IGMP snooping relies on these queries which are unconditionally forwarded to all ports, as the replies from the destination ports is what builds the internal tables in the switch to allow it to know which traffic to forward.

IGMP querier can be enabled on your router, switch, or even linux bridges.

Configuring IGMP/Multicast

Ensuring IGMP Snooping and Querier are enabled on your network (recommended)

Juniper - JunOS

Juniper EX switches, by default, enable IGMP snooping on all vlans as can be seen by this config snippet:

[edit protocols] user@switch# show igmp-snooping vlan all;

However, IGMP querier is not enabled by default. If you are using RVIs (Routed Virtual Interfaces) on your switch already, you can enabled IGMP v2 on the interface which enables the querier. However, most administrators do not use RVIs in all vlans on their switches and should be configured instead on the router. The below config setting is the same on Juniper EX switches using RVIs as it is on Juniper SRX service gateways/routers, and effectively enables IGMP querier on the specified interface/vlan. Note you must set this on all vlans which require multicast!:

set protocols igmp $iface version 2

Cisco

On Cisco switches, IGMP snooping is enabled by default. You do have to enable an IGMP snooping querier though:

ip igmp snooping querier

This will enable it for all vlans. You can verify that it is enabled:

show ip igmp snooping querier Vlan IP Address IGMP Version Port ------------------------------------------------------------- 1 172.16.34.4 v2 Switch 2 172.16.34.4 v2 Switch 3 172.16.34.4 v2 Switch

Brocade

Linux: Enabling Multicast querier on bridges

If your router or switch does not support enabling a multicast querier, and you are using a classic linux bridge (not Open vSwitch), then you can enable the multicast querier on the Linux bridge by adding this statement to your /etc/network/interfaces bridge configuration:

post-up ( echo 1 > /sys/devices/virtual/net/$IFACE/bridge/multicast_querier )

Disabling IGMP Snooping (not recommended)

Juniper - JunOS

set protocols igmp-snooping vlan all disable

Cisco Managed Switches

# conf t # no ip igmp snooping

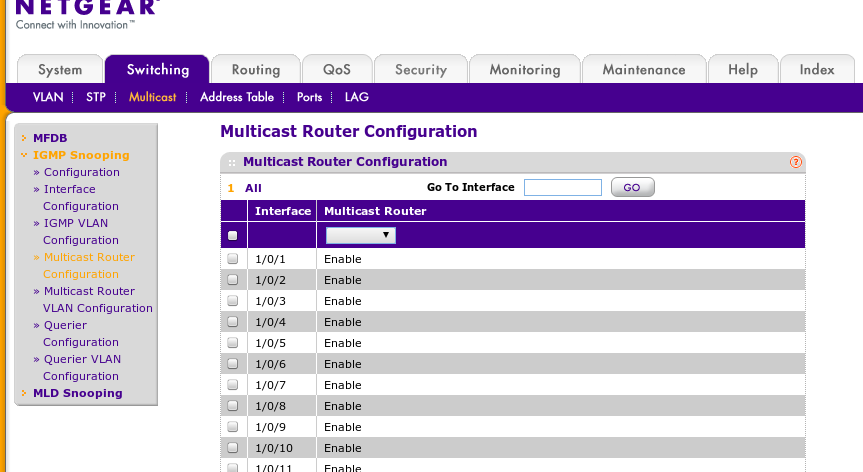

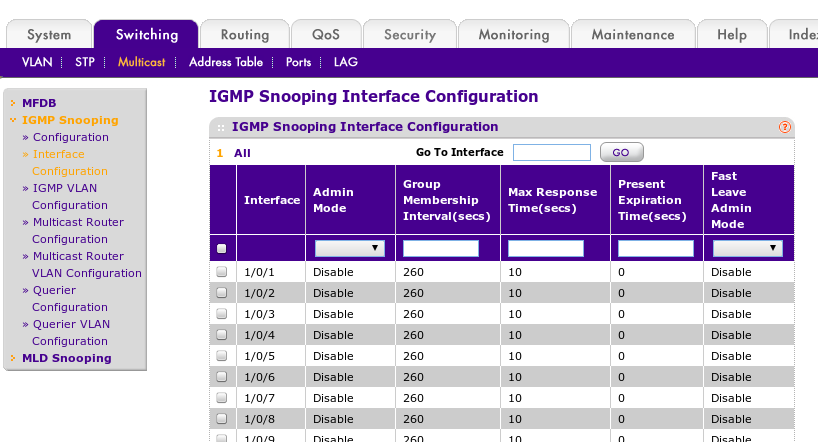

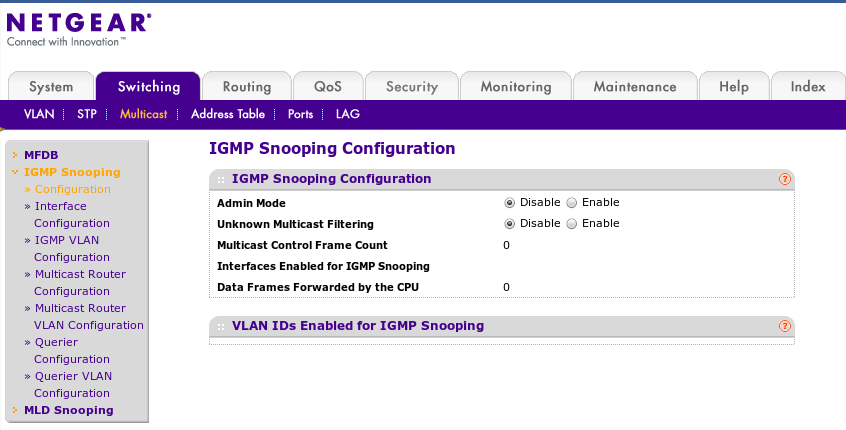

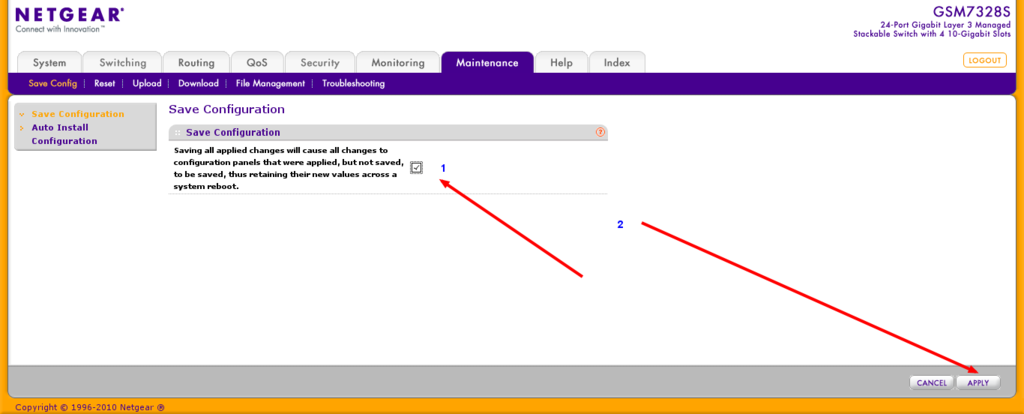

Netgear Managed Switches

the following are pics of setting to get multicast working on our netgear 7300 series switches. for more information see http://documentation.netgear.com/gs700at/enu/202-10360-01/GS700AT%20Series%20UG-06-18.html

Multicast with Infiniband

IP over Infiniband (IPoIB) supports Multicast but Multicast traffic is limited to 2043 Bytes when using connected mode even if you set a larger MTU on the IPoIB interface.

Corosync has a setting, netmtu, that defaults to 1500 making it compatible with connected mode Infiniband.

Changing netmtu

Changing the netmtu can increase throughput The following information is untested.

Edit the /etc/pve/cluster.conf file Add the section:

<totem netmtu="2043" />

<?xml version="1.0"?>

<cluster name="clustername" config_version="2">

<totem netmtu="2043" />

<cman keyfile="/var/lib/pve-cluster/corosync.authkey">

</cman>

<clusternodes>

<clusternode name="node1" votes="1" nodeid="1"/>

<clusternode name="node2" votes="1" nodeid="2"/>

<clusternode name="node3" votes="1" nodeid="3"/></clusternodes>

</cluster>

Testing multicast

Note: not all hosting companies allow multicast traffic.

First, check your cluster multicast address:

#pvecm status|grep "Multicast addresses" Multicast addresses: 239.192.221.35

Using omping

Install on all nodes

aptitude install omping

start omping on all nodes with the following command and check the output, e.g:

omping -m yourmulticastadress node1 node2 node3

Or not use -m which needs the multicast address

omping node1 node2 node3

- note to find the multicast address on proxmox 3.X run this:

pvecm status | grep Multicast

- note to find the multicast address on proxmox 4.X run this:

corosync-cmapctl -g totem.interface.0.mcastaddr

Troubleshooting

All of this information has been moved to a Work-in-progress page: Troubleshooting multicast, quorum and cluster issues)