Difference between revisions of "Storage: ZFS"

Bread-baker

(Complete rewrite for the upcoming Proxmox VE 3.4 release)

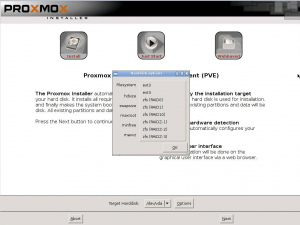

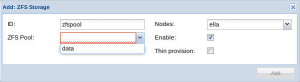


Revision as of 16:43, 30 January 2015

Introduction

ZFS is a combined file system and logical volume manager designed by Sun Microsystems. Starting with Proxmox VE 3.4, the native Linux kernel port of the ZFS file system is available as an optional file system and also as an additional choice for the root file system (using the Proxmox VE ISO installer proxmox-ve_3.3-0317e201-17-rc1.iso or higher). There is no need to compile ZFS manually; all packages are included (for both kernel branches, 2.6.32 and 3.10).

With ZFS, it is possible to get enterprise features on low-budget hardware, and also to build high performance systems by leveraging SSD caching or even SSD-only setups. ZFS can replace costly hardware RAID cards at the price of moderate CPU and memory load, combined with easy management.

In the first release, there are two ways to use ZFS on Proxmox VE:

- as a local directory storage, supporting all storage content types (instead of ext3 or ext4)

- as zvol block storage, currently supporting KVM images in raw format (new ZFS storage plugin)

  - The advantage of zvols is fast snapshots at the file-system level

This article describes how to use ZFS on Proxmox VE.

General ZFS advantages

- Easy configuration and management with Proxmox VE GUI and CLI.

- Reliable

- Protection against data corruption

- Data compression on file-system level

- Snapshots

- Copy-on-write clone

- Various raid levels: RAID0, RAID1, RAID10, RAIDZ-1, RAIDZ-2 and RAIDZ-3

- Can use SSD for cache

- Self healing

- Continuous integrity checking

- Designed for high storage capacities

- Asynchronous replication over the network

- Open Source

- Encryption

- ...

Hardware

ZFS depends heavily on memory, so you need at least 4GB to start. In practice, use as much as you can get for your hardware/budget. To prevent data corruption, high quality ECC RAM is strongly recommended.

If you use a dedicated cache and/or log disk, you should use an enterprise-class SSD (e.g. Intel SSD DC S3700 Series). This can increase overall performance significantly.

Installation as root file-system

When installing with the Proxmox VE installer 3.4 or later, you can choose which file system to use.

Administration

Create a ZPool

At least one disk is needed to create a ZFS pool. The sector size given by ashift (2^ashift bytes) should be equal to or larger than the sector size of the underlying disk; ashift=12 corresponds to 4096-byte sectors.

zpool create -f -o ashift=12 <pool-name> <device>
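To pick an ashift for a given disk, its sector size can be turned into a base-2 logarithm, rounding up. The following is a minimal bash sketch; the helper name ashift_for_sector_size is hypothetical, and the sector size is assumed to come from a source such as /sys/block/<disk>/queue/physical_block_size.

```shell
#!/usr/bin/env bash
# Sketch: smallest ashift such that 2^ashift >= the disk's sector size.
# ashift_for_sector_size is a hypothetical helper, not a ZFS command.
ashift_for_sector_size() {
    local size=$1
    local ashift=0
    # Double the candidate sector size until it covers the disk's sector size
    while (( (1 << ashift) < size )); do
        ashift=$((ashift + 1))
    done
    echo "$ashift"
}
```

For example, ashift_for_sector_size "$(cat /sys/block/sda/queue/physical_block_size)" prints 12 for a disk reporting 4096-byte sectors. Some disks report 512-byte sectors although they use 4096-byte sectors internally, which is one reason ashift=12 is a common choice regardless of what the disk reports.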

To enable compression:

zfs set compression=lz4 <pool-name>

RAID-0

Minimum 1 Disk

zpool create -f -o ashift=12 <pool-name> <device1> <device2>

RAID-1

Minimum 2 Disks

zpool create -f -o ashift=12 <pool-name> mirror <device1> <device2>

RAID-10

Minimum 4 Disks

zpool create -f -o ashift=12 <pool-name> mirror <device1> <device2> mirror <device3> <device4>

RAIDZ-1

Minimum 3 Disks

zpool create -f -o ashift=12 <pool-name> raidz1 <device1> <device2> <device3>

RAIDZ-2

Minimum 4 Disks

zpool create -f -o ashift=12 <pool-name> raidz2 <device1> <device2> <device3> <device4>
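The layouts above differ only in the vdev keywords placed before the devices. Below is a minimal dry-run sketch in bash, using a hypothetical function zpool_create_cmd, that checks the minimum disk counts listed above and prints the matching command instead of running it. For RAID-10 it pairs the devices into striped two-way mirrors in the order given.

```shell
#!/usr/bin/env bash
# Dry-run sketch: print (never run) the zpool create command for a RAID level,
# enforcing the minimum disk counts listed above.
# zpool_create_cmd is a hypothetical helper name.
zpool_create_cmd() {
    local level=$1 pool=$2
    shift 2
    local n=$# min
    local -a vdevs=()
    case $level in
        raid0)  min=1; vdevs=("$@") ;;
        raid1)  min=2; vdevs=(mirror "$@") ;;
        raidz1) min=3; vdevs=(raidz1 "$@") ;;
        raidz2) min=4; vdevs=(raidz2 "$@") ;;
        raid10)
            min=4
            if (( n % 2 != 0 )); then
                echo "raid10 needs an even number of disks" >&2
                return 1
            fi
            # Pair consecutive devices into two-way mirrors
            while (( $# > 0 )); do
                vdevs+=(mirror "$1" "$2")
                shift 2
            done
            ;;
        *)
            echo "unknown level: $level" >&2
            return 1
            ;;
    esac
    if (( n < min )); then
        echo "$level needs at least $min disks, got $n" >&2
        return 1
    fi
    echo zpool create -f -o ashift=12 "$pool" "${vdevs[@]}"
}
```

For example, zpool_create_cmd raid10 tank sda sdb sdc sdd prints the RAID-10 command shown above with tank as the pool name and the four disks paired into two mirrors. Review the printed command before running it.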

Cache (L2ARC)

A dedicated cache drive or partition (preferably an SSD) can be used to increase read performance.

zpool create -f -o ashift=12 <pool-name> <device> cache <cache_device>

Log (ZIL)

A dedicated log drive or partition (preferably an SSD) can be used to increase synchronous write performance.

zpool create -f -o ashift=12 <pool-name> <device> log <log_device>

Cache and Log on one Disk

ZIL and L2ARC can also share one SSD. First split the SSD into two partitions with parted or gdisk (important: use a GPT partition table).

zpool create -f -o ashift=12 <pool-name> <device> cache <device1.part1> log <device1.part2>
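The partitioning step can also be done non-interactively with sgdisk, which ships with gdisk. Below is a minimal dry-run sketch in bash that prints the commands for a new GPT with a fixed-size first partition and a second partition filling the rest of the disk. The helper name print_ssd_partition_cmds, the device name and the type code BF01 (the gdisk code for ZFS partitions) are assumptions. Which partition serves as log and which as cache is decided by the zpool create command; the high performance example later in this article puts a small ZIL on the first partition.

```shell
#!/usr/bin/env bash
# Dry-run sketch: print sgdisk commands that split one SSD into two GPT
# partitions. print_ssd_partition_cmds is a hypothetical helper name.
# WARNING: running "sgdisk -o" destroys the existing partition table.
print_ssd_partition_cmds() {
    local dev=$1 first_size=$2
    echo "sgdisk -o $dev"                            # new, empty GPT
    echo "sgdisk -n 1:0:+$first_size -t 1:BF01 $dev" # partition 1, fixed size
    echo "sgdisk -n 2:0:0 -t 2:BF01 $dev"            # partition 2, rest of the disk
}
```

For example, print_ssd_partition_cmds /dev/sdb 25G prints the three commands for a 25G first partition on /dev/sdb; check the device name carefully before running any of them.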

Replacing a failed device

zpool replace -f <pool-name> <old-device> <new-device>

Using ZFS Storage Plugin via Proxmox VE GUI

Once the zpool has been created, you can use it from the Proxmox VE GUI and CLI.

Adding a ZFS storage via GUI

Go to Datacenter/Storage and add your zpool with the ZFS storage plugin (select ZFS).

- ID: the name that identifies the storage

- ZFS Pool: the selection box shows all existing pools (use the CLI to create more)

- Thin provisioning: when enabled, the full space of a virtual disk is not allocated at the time the disk is created

Adding a ZFS storage via CLI

To add it via the CLI, use:

pvesm add <storage-name> -type zfspool -pool <pool-name>

Limit ZFS memory usage

It is good practice to limit the ZFS ARC to at most 50-70 percent of system memory, so that enough memory is left for the host. Without a limit, the ARC can grow to use a large part of the available memory.

Use your preferred editor to change the config in /etc/modprobe.d/zfs.conf and insert:

options zfs zfs_arc_max=4294967296

This example setting limits the usage to 4GB.
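zfs_arc_max is given in bytes, so 4GB here means 4 * 1024^3 = 4294967296 bytes. Below is a minimal bash sketch with two hypothetical helpers: gib_to_bytes converts GiB to bytes, and arc_max_for_percent derives a limit from a percentage of MemTotal in /proc/meminfo (reported there in kB), in line with the 50-70 percent guideline above.

```shell
#!/usr/bin/env bash
# Sketch: compute zfs_arc_max values in bytes.
# gib_to_bytes and arc_max_for_percent are hypothetical helper names.

# Convert whole GiB to bytes (1 GiB = 1024^3 bytes)
gib_to_bytes() {
    echo $(( $1 * 1024 * 1024 * 1024 ))
}

# Given percentage of MemTotal, in bytes; /proc/meminfo reports MemTotal in kB
arc_max_for_percent() {
    local percent=$1 meminfo=${2:-/proc/meminfo}
    local mem_kb
    mem_kb=$(awk '/^MemTotal:/ { print $2 }' "$meminfo")
    echo $(( mem_kb * 1024 * percent / 100 ))
}

# Print the modprobe option line for a 4 GiB limit
echo "options zfs zfs_arc_max=$(gib_to_bytes 4)"
```

The setting in /etc/modprobe.d/zfs.conf takes effect the next time the zfs module is loaded, e.g. after a reboot; the value can also be changed at runtime by writing the byte count to /sys/module/zfs/parameters/zfs_arc_max. If the root file system is on ZFS, the initramfs may also need to be refreshed with update-initramfs -u so the setting is used at boot.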

Misc

QEMU tuning

See the thread on the Proxmox forum, as suggested by user Nemesiz:

- pool:

zfs set primarycache=all tank

- KVM config:

  - change the disk cache mode to Write back

    - This can be done in the web GUI or manually. Example:

ide0: data_zfs:100/vm-100-disk-1.raw,cache=writeback

If the cache mode is not set (the default is cache=none), starting the VM fails like this:

qm start 4016 kvm: -drive file=/data/pve-storage/images/4016/vm-4016-disk-1.raw,if=none,id=drive-virtio1,aio=native,cache=none: could not open disk image /data/pve-storage/images/4016/vm-4016-disk-1.raw: Invalid argument
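Instead of editing the VM configuration by hand, the cache mode can also be set with qm set. Below is a minimal dry-run sketch in bash, using a hypothetical helper writeback_cmd, that prints such a command for one drive, replacing an existing cache= option or appending one. The VM ID, drive name and volume are taken as arguments and are only examples.

```shell
#!/usr/bin/env bash
# Dry-run sketch: print a qm set command that switches one VM disk to
# cache=writeback, replacing an existing cache= option if present.
# writeback_cmd is a hypothetical helper name.
writeback_cmd() {
    local vmid=$1 drive=$2 spec=$3
    if [[ $spec == *cache=* ]]; then
        # Replace the current cache mode (none, writethrough, ...) with writeback
        spec=$(sed -E 's/cache=[a-z]+/cache=writeback/' <<< "$spec")
    else
        spec="$spec,cache=writeback"
    fi
    echo "qm set $vmid -$drive $spec"
}
```

For example, writeback_cmd 100 ide0 data_zfs:100/vm-100-disk-1.raw prints a command that produces the ide0 line shown above.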

Example configurations for running Proxmox VE with ZFS

Install on a high performance system

From 2013 onwards, high performance servers have 16-64 cores, 256GB-1TB RAM and potentially many 2.5" disks and/or a PCIe-based SSD with half a million IOPS. High performance systems benefit from a number of custom settings; for example, enabling compression typically improves performance.

- If you have many disks, keep them organized by using aliases. Edit /etc/zfs/vdev_id.conf to define aliases for the disk devices found in /dev/disk/by-id/:

# run 'udevadm trigger' after updating this file
alias a0 scsi-36848f690e856b10018cdf39854055206
alias b0 scsi-36848f690e856b10018cdf3ce573fdeb6
alias a1 scsi-36848f690e856b10018cdf40f5b277cbc
alias b1 scsi-36848f690e856b10018cdf43a5db1b99b
alias a2 scsi-36848f690e856b10018cdf4575f652ad0
alias b2 scsi-36848f690e856b10018cdf47761587cec

Use flash for caching/logs. If you have only one SSD, use parted or gdisk to create a small partition for the ZIL (ZFS intent log) and a larger one for the L2ARC (ZFS read cache on disk). Make sure that the ZIL is on the first partition. In our case we have an Express Flash PCIe SSD with 175GB capacity and set up a 25GB ZIL partition and a 150GB L2ARC cache partition.

- edit /etc/modprobe.d/zfs.conf to apply several tuning options for high performance servers:

# ZFS tuning for a proxmox machine that reserves 64GB for ZFS
#
# Don't let ZFS use less than 4GB and more than 64GB
options zfs zfs_arc_min=4294967296
options zfs zfs_arc_max=68719476736
#
# disabling prefetch is no longer required
options zfs l2arc_noprefetch=0

- create a zpool of striped mirrors (equivalent to RAID10) with log device and cache and always enable compression:

zpool create -O compression=on -f tank mirror a0 b0 mirror a1 b1 mirror a2 b2 log /dev/rssda1 cache /dev/rssda2

- check the status of the newly created pool:

root@proxmox:/# zpool status
  pool: tank
 state: ONLINE
  scan: none requested
config:

        NAME        STATE     READ WRITE CKSUM
        tank        ONLINE       0     0     0
          mirror-0  ONLINE       0     0     0
            a0      ONLINE       0     0     0
            b0      ONLINE       0     0     0
          mirror-1  ONLINE       0     0     0
            a1      ONLINE       0     0     0
            b1      ONLINE       0     0     0
          mirror-2  ONLINE       0     0     0
            a2      ONLINE       0     0     0
            b2      ONLINE       0     0     0
        logs
          rssda1    ONLINE       0     0     0
        cache
          rssda2    ONLINE       0     0     0

errors: No known data errors

Using PVE 2.3 on a 2013 high performance system with ZFS you can install Windows Server 2012 Datacenter Edition with GUI in just under 4 minutes.
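Because the ARC limits in /etc/modprobe.d/zfs.conf are written as raw byte counts, a typo is easy to miss. Below is a minimal bash sketch, using awk, that lists the zfs_arc_* options from a modprobe configuration file such as the tuning file above and shows each value in GiB, flagging values that are not a whole number of GiB. The helper name show_arc_limits is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: show zfs_arc_* options from a modprobe config file in GiB and flag
# values that are not whole GiB. show_arc_limits is a hypothetical helper name.
show_arc_limits() {
    awk '
        $1 == "options" && $2 == "zfs" {
            for (i = 3; i <= NF; i++) {
                split($i, kv, "=")
                if (kv[1] ~ /^zfs_arc_/) {
                    gib = kv[2] / (1024 * 1024 * 1024)
                    note = (kv[2] % (1024 * 1024 * 1024) == 0) ? "" : " (not a whole GiB)"
                    printf "%s = %s bytes = %.2f GiB%s\n", kv[1], kv[2], gib, note
                }
            }
        }' "${1:-/etc/modprobe.d/zfs.conf}"
}
```

Run against the tuning file above, show_arc_limits should report 4.00 GiB for zfs_arc_min and 64.00 GiB for zfs_arc_max; comment lines and options such as l2arc_noprefetch are ignored.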

Troubleshooting and known issues

Grub boot ZFS problem

- Symptoms: stuck at boot with a blinking prompt.

- Reason: With ZFS RAID, it can happen that the mainboard does not initialize all disks correctly, and GRUB waits for all RAID disk members and fails. This can happen with more than 2 disks in a ZFS RAID configuration; we saw this on some boards with ZFS RAID-0/RAID-10.

ZFS mounting workaround

The default ZFS mount -a script runs too late in the boot process for most system scripts. The following steps make ZFS mount correctly. This is only necessary if you do not use ZFS as the root file system but do use it as an additional directory storage.

2014-01-22: the info below came from this excellent wiki page: http://wiki.complete.org/ConvertingToZFS

- Edit /etc/default/zfs and set ZFS_MOUNT='yes'

- Edit /etc/insserv.conf and add zfs-mount (without a plus) at the end of the $local_fs line:

#
# All local file-systems are mounted (done during boot phase)
#
$local_fs +mountall +mountall-bootclean +mountoverflowtmp +umountfs

- Edit /etc/init.d/zfs-mount, find three lines near the top and change them like this:

# Required-Start:
# Required-Stop:
# Default-Start: S

Note: the values of the Required-Start and Required-Stop entries are removed, leaving them empty.

- To activate the init.d changes, run:

insserv -v -d zfs-mount
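The insserv.conf edit from the steps above can also be scripted. Below is a minimal bash sketch, assuming the file contains a $local_fs line like the one shown; the helper name add_zfs_mount_to_local_fs is hypothetical. It only appends zfs-mount if it is not already there, and sed keeps a .bak copy of the original file.

```shell
#!/usr/bin/env bash
# Sketch: append zfs-mount (without a plus) to the $local_fs line of
# insserv.conf, unless it is already present. The original is kept as <file>.bak.
# add_zfs_mount_to_local_fs is a hypothetical helper name.
add_zfs_mount_to_local_fs() {
    local conf=${1:-/etc/insserv.conf}
    if ! grep -Eq '^\$local_fs.*[[:space:]]zfs-mount([[:space:]]|$)' "$conf"; then
        # Replace any trailing whitespace on the $local_fs line with " zfs-mount"
        sed -i.bak '/^\$local_fs/ s/[[:space:]]*$/ zfs-mount/' "$conf"
    fi
}
```

Running it twice leaves the file unchanged the second time, so it is safe to repeat; insserv -v -d zfs-mount still has to be run afterwards as described above.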

I had an issue with PVE storage on ZFS: PVE would start before ZFS and create directories at the ZFS mount point. To fix that, boot into single-user mode and remove those directories (make sure they are empty first).

Also see https://github.com/zfsonlinux/pkg-zfs/issues/101

Glossary

- ZPool is the logical storage unit built from the underlying disks, which ZFS uses.

- ZVol is an emulated block device provided by ZFS.

- ZIL is the ZFS Intent Log.

- ARC is the Adaptive Replacement Cache, located in RAM.

- L2ARC is the Level 2 Adaptive Replacement Cache and should be on a fast device (like an SSD).

Further readings about ZFS

- http://zfsonlinux.org/faq.html

- http://wiki.complete.org/ConvertingToZFS

- http://hub.opensolaris.org/bin/download/Community+Group+zfs/docs/zfslast.pdf

The following has some very important information to know before implementing ZFS on a production system:

- http://www.solarisinternals.com/wiki/index.php/ZFS_Best_Practices_Guide

The manual pages are very well written:

man zfs
man zpool