Two-Node High Availability Cluster


Revision as of 10:24, 19 January 2012

Note: Article about Proxmox VE 2.0 beta

Introduction

This article explores how to build a two-node cluster with HA enabled under Proxmox. HA should generally be deployed on at least three nodes to prevent erratic behaviour and potentially fatal data inconsistencies (for further information, look up "Quorum"). Nevertheless, with some tweaking, Proxmox can also run HA successfully on a two-node cluster.

Although a third, shared quorum disk partition is recommended for two-node clusters, Proxmox allows building the cluster without one. Let's see how.

System requirements

If you run HA, use only high-end server hardware with no single point of failure. This includes redundant disks (hardware RAID), redundant power supplies, UPS systems and network bonding.

- Fully configured Proxmox VE 2.0 Cluster, with 2 nodes.

- Shared storage (SAN for Virtual Disk Image Store for HA KVM). In this case, no external storage was used. Instead, a cheaper alternative (DRBD) was tested.

- Reliable network, suitably configured.

- Fencing device(s), reliable and TESTED! We will use HP's iLO for this example.

What is DRBD used for?

For this test configuration, two DRBD resources were created: one for VM images and another for VM user data. When properly configured, DRBD creates a RAID 1 style mirror over the network (be aware that, although possible, running it over a WAN means high latency). Because VMs and data are replicated synchronously on both nodes, if one node fails, the "dead" machines can be restarted on the other node without data loss.

Configuring Fencing

Fencing is vital for Proxmox to manage a node loss and thus provide effective HA. Fencing is the mechanism that prevents data inconsistencies between nodes in a cluster by ensuring that a node reported as "dead" is really down. If it is not, a reboot or power-off signal is sent to force it into a safe state and to prevent multiple instances of the same virtual machine from running concurrently on different nodes.

Many different methods can be used for fencing a node, so just a few changes would be needed to make this work under different scenarios.

First, log in to the CLI on any of your properly configured cluster nodes.

Then, create a copy of cluster.conf with .new appended to its name:

cp /etc/pve/cluster.conf /etc/pve/cluster.conf.new

Now edit cluster.conf.new. Near the top of the file you will see this:

<cluster alias="hpiloclust" config_version="12" name="hpiloclust">

Be sure to increase the "config_version" number each time you plan to apply a new configuration, as this is the internal mechanism the cluster configuration tools use to detect changes.
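Incrementing the version by hand is easy to forget, so the step can be scripted. The following is a minimal sketch, not part of Proxmox: the helper name bump_config_version is hypothetical, and it assumes the attribute appears exactly as in the line shown above.

```python
import re


def bump_config_version(conf_text: str) -> str:
    """Return conf_text with the first config_version attribute increased by one."""
    pattern = re.compile(r'(config_version=")(\d+)(")')

    def _increment(match):
        # Keep the attribute name and quotes, replace only the number.
        return f"{match.group(1)}{int(match.group(2)) + 1}{match.group(3)}"

    new_text, count = pattern.subn(_increment, conf_text, count=1)
    if count == 0:
        raise ValueError("no config_version attribute found")
    return new_text
```

To use it, read /etc/pve/cluster.conf.new, pass its contents through the function, and write the result back before committing the change.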

To be able to create and manage a two-node cluster, edit the cman configuration part to include this:

<cman two_node="1" expected_votes="1"> </cman>
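To see why this setting matters, consider the usual majority rule for quorum. The sketch below is a simplified model for illustration only, not cman's actual code; the function names are hypothetical, and it assumes one vote per node as in this article.

```python
def majority_quorum(expected_votes: int) -> int:
    """Votes needed for a strict majority of expected_votes."""
    return expected_votes // 2 + 1


def is_quorate(votes_present: int, expected_votes: int, two_node: bool = False) -> bool:
    """Simplified model: in two-node mode a single vote is enough."""
    needed = 1 if two_node else majority_quorum(expected_votes)
    return votes_present >= needed


# Without the special mode, a 2-node cluster that loses one node is stuck.
print(is_quorate(votes_present=1, expected_votes=2))                  # False
# With two_node="1" and expected_votes="1", the survivor stays quorate.
print(is_quorate(votes_present=1, expected_votes=1, two_node=True))   # True
```

Because both nodes can consider themselves quorate after a network failure, this mode relies on fencing to decide which node survives.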

Now, add the available fencing devices to the configuration file by adding these lines (right after </clusternodes> is fine):

<fencedevices>

<fencedevice agent="fence_ilo" hostname="nodeA.your.domain" login="hpilologin" name="fenceNodeA" passwd="hpilopword"/>

<fencedevice agent="fence_ilo" hostname="nodeB.your.domain" login="hpilologin" name="fenceNodeB" passwd="hpilopword"/>

</fencedevices>

If you prefer to use IPs instead of host names, substitute:

hostname="nodeA.your.domain"

for

ipaddr="192.168.1.2"

or whatever IP belongs to the iLO interface on that node.

Next, you need to tell the cluster which fencing device fences which host. Within the "<clusternode></clusternode>" tags, add the following for each node (the "method" name is arbitrary; choose whatever you like):

<clusternode name="nodeA.your.domain" nodeid="1" votes="1">

<fence>

<method name="1">

<device name="fenceNodeA" action="reboot"/>

</method>

</fence>

</clusternode>

<clusternode name="nodeB.your.domain" nodeid="2" votes="1">

<fence>

<method name="1">

<device name="fenceNodeB" action="reboot"/>

</method>

</fence>

</clusternode>
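A mistyped device name in a clusternode entry is an easy mistake to make. As a rough sanity check before committing, the sketch below parses the configuration and lists every device reference that has no matching fencedevice definition. The function name undefined_fence_devices is hypothetical, and the check covers only this cross-reference, not the full cluster.conf schema.

```python
import xml.etree.ElementTree as ET


def undefined_fence_devices(conf_text: str) -> list:
    """List 'node -> device' pairs whose device has no <fencedevice> entry.

    Raises xml.etree.ElementTree.ParseError if the text is not well-formed XML.
    """
    root = ET.fromstring(conf_text)
    defined = {dev.get("name") for dev in root.iter("fencedevice")}
    missing = []
    for node in root.iter("clusternode"):
        for dev in node.iter("device"):
            if dev.get("name") not in defined:
                missing.append(f"{node.get('name')} -> {dev.get('name')}")
    return missing
```

An empty list means every node points at a defined fencing device; a parse error means the file is not well-formed and would be rejected anyway.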

Choose the "action" you prefer. "reboot" fences the node and reboots it so that it can return to a proper state (e.g. once the network is operative again). "off" can also be used, but it requires human intervention to boot the node again.

So far, so good. Fencing is now configured and must be tested before entering a production environment.

Applying changes

Once cluster.conf.new is properly modified and saved, it is time to apply the changes and propagate them across all cluster nodes. This is done through the GUI, while connected to the node where the file was modified.

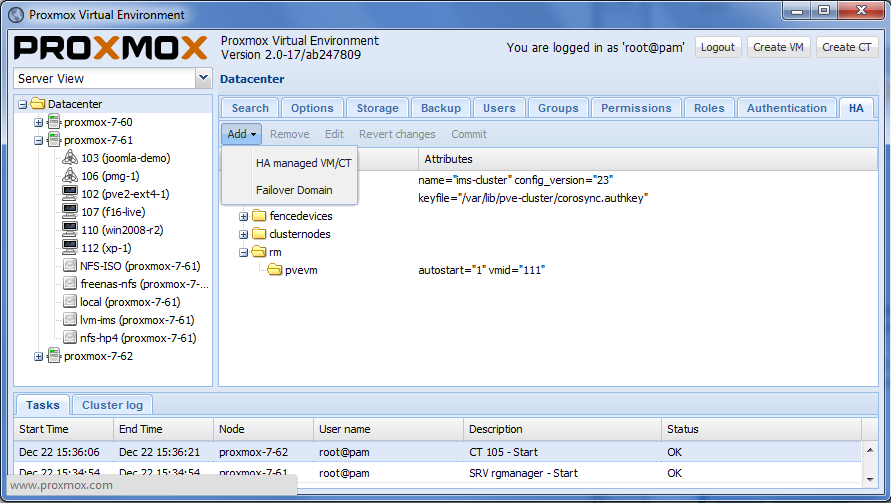

On the left of the screen, select your cluster. Then, at the top right, select the tab named "HA" (see the screenshot).

The pending changes will appear at the bottom of the screen. If everything is correct, click "commit" and acknowledge the operation. Two scenarios are now possible:

- Everything goes smoothly: no errors were detected, changes were applied and the new configuration was propagated across all cluster nodes. Now, the configuration tree should show the new elements (fencing devices) properly configured.

- Errors appeared: check your cluster.conf.new syntax and the parameters of the fencing devices. An incorrect password, hostname or username will make the tests fail and will prevent the new configuration from taking effect. It is recommended to check beforehand that fencing works by running the fence_ilo command (or whichever agent you use) from the CLI.
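The CLI check mentioned above can be sketched as follows. This assumes the agent accepts the common fence-agent options -a (address), -l (login), -p (password) and -o (action); confirm them with fence_ilo -h on your system. The helper names and the example address are hypothetical.

```python
import subprocess


def fence_ilo_status_cmd(address: str, login: str, password: str) -> list:
    """Build the argument list for a harmless 'status' query to an iLO."""
    return ["fence_ilo", "-a", address, "-l", login, "-p", password, "-o", "status"]


def fence_ilo_reachable(address: str, login: str, password: str) -> bool:
    """Run the status query; exit code 0 is treated as success (assumption)."""
    result = subprocess.run(fence_ilo_status_cmd(address, login, password))
    return result.returncode == 0
```

For example, fence_ilo_reachable("192.168.1.2", "hpilologin", "hpilopword") queries the iLO of node A without rebooting it. Only switch the action to "reboot" on a node you can afford to lose.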

Checking everything works

Create some virtual machines and/or containers on one of your nodes. Start them and enable HA.

Once everything is running smoothly on your cluster, power off the node where the machines are running (press the power button) or pull the network cables used for data and synchronization (keep the one used for the fencing mechanism). From your web manager (connected to the other node), you can watch how things evolve by clicking on the "healthy" node and looking at the scrolling "syslog" tab. It should detect that the other node is down, form a new quorum and fence the failed node. If fencing fails, re-check your fencing configuration, as it MUST work for machines to be restarted automatically.

Problems and workarounds

DRBD split-brain

Under some circumstances, data consistency between the two nodes on DRBD partitions can be lost. In this case, the only effective solution is manual intervention. The cluster administrator must decide which node's data is preserved and which node's is discarded in order to restore consistency. Under DRBD, split-brain situations will probably occur when the data connection is lost for longer than a few seconds.

Let's consider a failure scenario in which node A was running most of the machines and is therefore the node whose data we want to keep. Changes that node B made to the DRBD partition while the split-brain lasted must therefore be discarded. We assume a Primary/Primary configuration for DRBD. The procedure is:

- Go to a node B terminal (repeat for each DRBD resource affected by the split-brain):

drbdadm secondary [resource name]

drbdadm disconnect [resource name]

drbdadm -- --discard-my-data connect [resource name]

- Go to a node A terminal (repeat for each DRBD resource affected by the split-brain):

drbdadm connect [resource name]

- Go back to the node B terminal (repeat for each DRBD resource affected by the split-brain):

drbdadm primary [resource name]
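With several resources it is easy to run a command on the wrong node, so it can help to write the procedure out as an ordered plan first. The sketch below only generates the commands from the steps above and does not execute anything. The function name, the node labels and the resource name "r0" are hypothetical.

```python
def split_brain_recovery_plan(resources, victim="nodeB", survivor="nodeA"):
    """Return (node, command) pairs in the order given by the procedure above.

    'victim' is the node whose changes are discarded; 'survivor' keeps its data.
    """
    plan = []
    for res in resources:
        plan += [
            (victim, f"drbdadm secondary {res}"),
            (victim, f"drbdadm disconnect {res}"),
            (victim, f"drbdadm -- --discard-my-data connect {res}"),
            (survivor, f"drbdadm connect {res}"),
            (victim, f"drbdadm primary {res}"),
        ]
    return plan


# Print a checklist for one hypothetical resource.
for node, command in split_brain_recovery_plan(["r0"]):
    print(f"[{node}] {command}")
```

Review the printed checklist and confirm which node is the victim before running anything, since --discard-my-data is destructive on that node.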

Fencing keeps on failing

For HA to work, fencing MUST succeed when a node fails. You must therefore ensure that the fencing device is reachable (a network connection exists) and powered (if, for testing purposes, you just pulled the power cord, things will go wrong). It is strongly encouraged to have network switches and power supplies protected by UPS units and made redundant, to prevent a blackout that would make HA unusable. What is more, if the HA mechanism fails, you won't be able to manually restart the affected VMs on the other host.