The following sections will focus on common virtualization tasks and explain the Proxmox VE specifics regarding the administration and management of the host machine.

Proxmox VE is based on Debian GNU/Linux with additional repositories to provide the Proxmox VE related packages. This means that the full range of Debian packages is available including security updates and bug fixes. Proxmox VE provides its own Linux kernel based on the Ubuntu kernel. It has all the necessary virtualization and container features enabled and includes ZFS and several extra hardware drivers.

For other topics not included in the following sections, please refer to the Debian documentation. The Debian Administrator's Handbook is available online, and provides a comprehensive introduction to the Debian operating system (see [Hertzog13]).

Package Repositories

Proxmox VE uses APT as its package management tool like any other Debian-based system.

Proxmox VE automatically checks for package updates on a daily basis. The root@pam user is notified via email about available updates. From the GUI, the Changelog button can be used to see more details about a selected update.

Repositories in Proxmox VE

Repositories are collections of software packages. They can be used to install new software, and they are also the source of updates.

You need valid Debian and Proxmox repositories to get the latest security updates, bug fixes and new features.

APT repositories are defined in the file /etc/apt/sources.list in the legacy single-line format and in .sources files placed in /etc/apt/sources.list.d/ for the modern deb822 multi-line format. See Repository Formats for details.

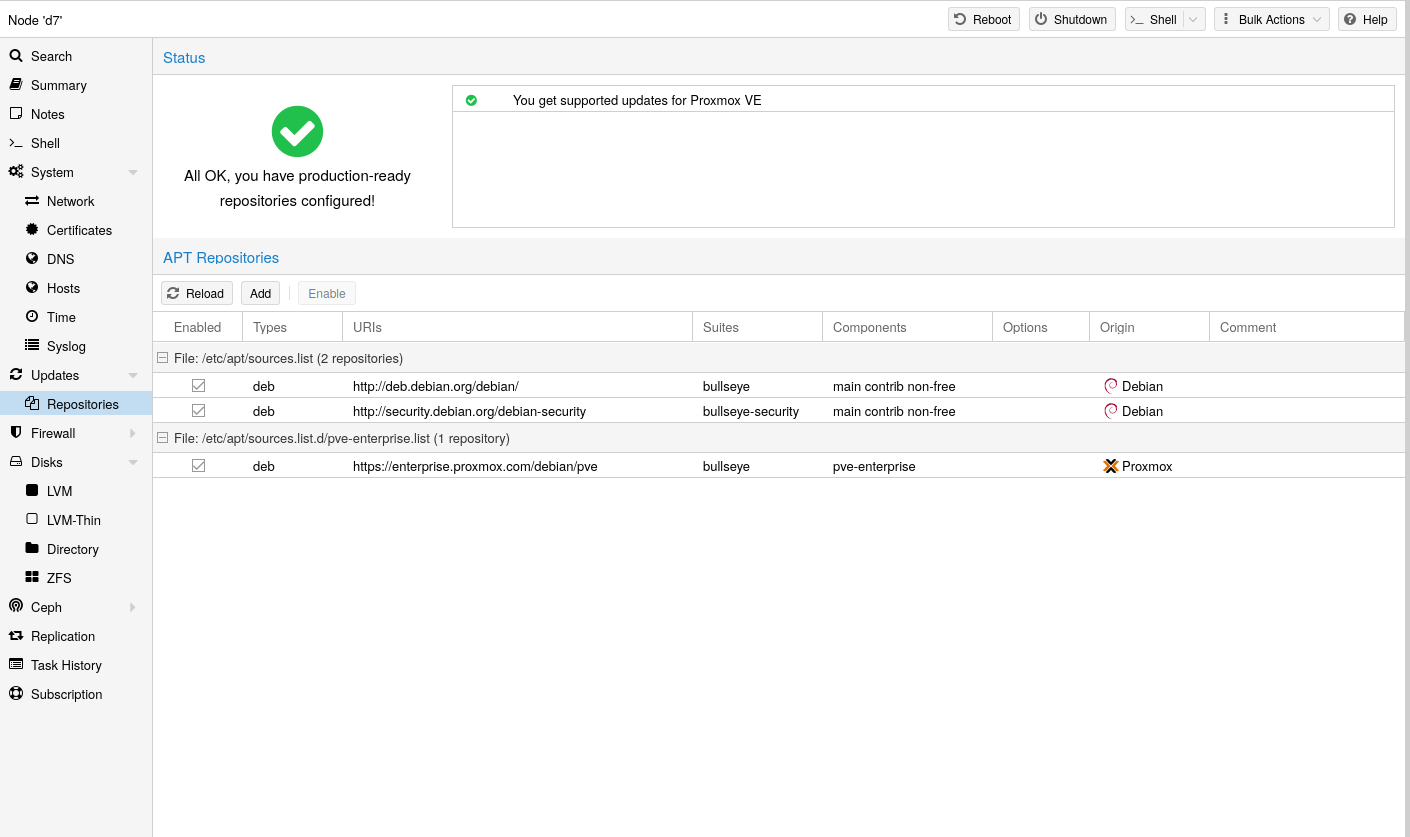

Repository Management

Since Proxmox VE 7, you can check the repository state in the web interface. The node summary panel shows a high-level status overview, while the separate Repository panel shows an in-depth status and a list of all configured repositories.

Basic repository management, for example, activating or deactivating a repository, is also supported.

The available packages from a repository are acquired by running apt update. Updates can be installed directly using apt, or via the GUI (Node → Updates).

Repository Formats

Package repositories can be configured in the source list /etc/apt/sources.list and the files contained in /etc/apt/sources.list.d/.

There are two formats supported:

- single line: In a single-line sources.list file, each line defines a package repository. Empty lines are ignored. A # character anywhere on a line marks the remainder of that line as a comment. This is the legacy format. Since Debian 13 Trixie, apt will complain about using this format. You can automatically migrate most repositories using the apt modernize-sources command.

- deb822: In the multi-line format repo.sources file, each entry consists of multiple lines of key-value pairs. A file can include multiple entries by separating each group with a blank line. This is the modern format.
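To make the relationship between the two formats concrete, the following sketch prints the single-line equivalent of every entry in a deb822 file. It is a minimal illustration, not APT's own parser: it only understands the five keys used in this chapter, and the function name deb822_to_lines is hypothetical.

```shell
# Print the legacy single-line form of each entry in a deb822 .sources file.
# Assumption: only Types, URIs, Suites, Components and Signed-By are used.
deb822_to_lines() {
    awk '
    function emit(   t, u, s, nt, nu, ns, T, U, S, opt) {
        if (types == "") return
        nt = split(types, T, " ")
        nu = split(uris, U, " ")
        ns = split(suites, S, " ")
        opt = (signed != "") ? " [signed-by=" signed "]" : ""
        # One legacy line per combination of type, URI and suite.
        for (t = 1; t <= nt; t++)
            for (u = 1; u <= nu; u++)
                for (s = 1; s <= ns; s++)
                    print T[t] opt " " U[u] " " S[s] " " comps
        types = uris = suites = comps = signed = ""
    }
    /^[ \t]*$/ { emit(); next }   # a blank line ends an entry
    /^#/       { next }           # skip comment lines
    {
        key = $0; sub(/:.*/, "", key)
        val = $0; sub(/^[^:]*:[ \t]*/, "", val)
        if (key == "Types")      types  = val
        if (key == "URIs")       uris   = val
        if (key == "Suites")     suites = val
        if (key == "Components") comps  = val
        if (key == "Signed-By")  signed = val
    }
    END { emit() }
    ' "$1"
}
```

For example, deb822_to_lines /etc/apt/sources.list.d/debian.sources prints one deb or deb-src line per type and suite, which shows how a single multi-line entry can stand in for several legacy lines.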

Debian Base Repositories

Types: deb deb-src
URIs: http://deb.debian.org/debian/
Suites: trixie trixie-updates
Components: main non-free-firmware
Signed-By: /usr/share/keyrings/debian-archive-keyring.gpg

Types: deb deb-src
URIs: http://security.debian.org/debian-security/
Suites: trixie-security
Components: main non-free-firmware
Signed-By: /usr/share/keyrings/debian-archive-keyring.gpg

Proxmox VE Enterprise Repository

This is the recommended repository and available for all Proxmox VE subscription users. It contains the most stable packages and is suitable for production use. The pve-enterprise repository is enabled by default:

Types: deb
URIs: https://enterprise.proxmox.com/debian/pve
Suites: trixie
Components: pve-enterprise
Signed-By: /usr/share/keyrings/proxmox-archive-keyring.gpg

Please note that you need a valid subscription key to access the pve-enterprise repository. We offer different support levels; you can find further details at https://proxmox.com/en/proxmox-virtual-environment/pricing.

You can disable this repository by commenting out every line of the above stanza with a # at the start of each line, or by adding Enabled: no to the stanza. This prevents error messages if your host does not have a subscription key. Please configure the pve-no-subscription repository in that case.

Proxmox VE No-Subscription Repository

As the name suggests, you do not need a subscription key to access this repository. It can be used for testing and non-production use. It’s not recommended to use this on production servers, as these packages are not always as heavily tested and validated.

We recommend configuring this repository in /etc/apt/sources.list.d/proxmox.sources.

Types: deb
URIs: http://download.proxmox.com/debian/pve
Suites: trixie
Components: pve-no-subscription
Signed-By: /usr/share/keyrings/proxmox-archive-keyring.gpg

Remember that you will always need the base Debian repositories in addition to a Proxmox VE repository.

Proxmox VE Test Repository

This repository contains the latest packages and is primarily used by developers to test new features. To configure it, add the following stanza to the file /etc/apt/sources.list.d/proxmox.sources:

Types: deb
URIs: http://download.proxmox.com/debian/pve
Suites: trixie
Components: pve-test
Signed-By: /usr/share/keyrings/proxmox-archive-keyring.gpg

The pve-test repository should (as the name implies) only be used for testing new features or bug fixes.

Ceph Repositories

Ceph-related packages are kept up to date with a preconfigured Ceph enterprise repository. Preinstalled packages enable connecting to an external Ceph cluster and adding its RBD or CephFS pools as storage. You can also build a hyper-converged infrastructure (HCI) by running Ceph on top of the Proxmox VE cluster.

Information from this chapter is helpful in the following cases:

- Installing Ceph to build an HCI: Decide on one of the repositories described below and on a recent Ceph release, which you can then select in the web-based wizard or the CLI tool.

- Already running the HCI and want to upgrade to the succeeding Ceph major release: Please follow the related Ceph upgrade guide.

- Already running the HCI and want to upgrade to the succeeding Proxmox VE major release: In an HCI, each Proxmox VE major release requires a corresponding minimum Ceph major release. Please follow the related Proxmox VE upgrade guide.

- Not running an HCI but using an external Ceph cluster: To install newer packages used to connect to Ceph, apply available system updates, decide on a repository and Ceph release listed below, add it to your node via the Repository panel, apply newly available system updates, verify the result by running ceph --version, and disable the old Ceph repository.

To read the latest version of the admin guide for your Proxmox VE release, make sure that all system updates are installed and that this page has been reloaded.

| Ceph release | | enterprise | no-subscription | test |
|---|---|---|---|---|
| ceph-squid | 2026-09 (v19.2) | recommended | available | available |

The content of the ceph.sources file below serves as a reference (prior to Proxmox VE 9 the file ceph.list was used). To make changes, please follow the case that applies to your situation as described at the beginning of this subchapter. If you have disabled a repository in the web UI and also want to delist it, you can manually remove the corresponding entry from the file.

enterprise

This repository is recommended for production use and contains the most stable package versions. It is accessible if the Proxmox VE node has a valid subscription of any level. For details and included customer support levels visit:

Types: deb
URIs: https://enterprise.proxmox.com/debian/ceph-squid
Suites: trixie
Components: enterprise
Signed-By: /usr/share/keyrings/proxmox-archive-keyring.gpg

no-subscription

This repository is suitable for testing and for non-production use. It is freely accessible and does not require a valid subscription. After some time, its package versions are also made available in the enterprise repository.

Types: deb
URIs: http://download.proxmox.com/debian/ceph-squid
Suites: trixie
Components: no-subscription
Signed-By: /usr/share/keyrings/proxmox-archive-keyring.gpg

test

This repository contains the latest package versions and is primarily used by developers to test new features and bug fixes.

Types: deb
URIs: http://download.proxmox.com/debian/ceph-squid
Suites: trixie
Components: test
Signed-By: /usr/share/keyrings/proxmox-archive-keyring.gpg

The Ceph test repository should (as the name implies) only be used for testing new features or bug fixes.

Debian Firmware Repository

Starting with Debian Bookworm (Proxmox VE 8) non-free firmware (as defined by DFSG) has been moved to the newly created Debian repository component non-free-firmware.

Since Proxmox VE 9 this repository is enabled by default for new installations to ensure they can get Early OS Microcode Updates.

You can also acquire additional Runtime Firmware Files that are not already included in the pre-installed package pve-firmware.

To be able to install packages from this component, run editor /etc/apt/sources.list, append non-free-firmware to the end of each .debian.org repository line and run apt update.

If you upgraded your Proxmox VE 9 install from a previous version of Proxmox VE and have modernized your package repositories to the new deb822-style, you will need to adapt /etc/apt/sources.list.d/debian.sources instead. Run editor /etc/apt/sources.list.d/debian.sources and add non-free-firmware to the lines starting with Components: of each stanza.
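The edit to debian.sources can also be previewed non-interactively. The sketch below appends non-free-firmware to every Components: line that does not already list it and writes the result to standard output, so the original file stays untouched until you have reviewed the change. The function name add_firmware_component is hypothetical.

```shell
# Print FILE with non-free-firmware appended to each Components: line
# that does not already contain it. The input file is not modified.
add_firmware_component() {
    awk '
    /^Components:/ && $0 !~ /(^|[ \t])non-free-firmware([ \t]|$)/ {
        print $0 " non-free-firmware"
        next
    }
    { print }
    ' "$1"
}

# Possible usage on a host:
#   add_firmware_component /etc/apt/sources.list.d/debian.sources > /tmp/debian.sources
#   diff -u /etc/apt/sources.list.d/debian.sources /tmp/debian.sources
```

After copying the reviewed file into place, run apt update as described above.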

Modernizing your package repositories is recommended. Otherwise, apt on Debian Trixie will complain. You can run apt modernize-sources to do so.

SecureApt

The Release files in the repositories are signed with GnuPG. APT uses these signatures to verify that all packages are from a trusted source.

If you install Proxmox VE from an official ISO image, the key for verification is already installed.

If you install Proxmox VE on top of Debian, download and install the key with the following commands:

# wget https://enterprise.proxmox.com/debian/proxmox-archive-keyring-trixie.gpg -O /usr/share/keyrings/proxmox-archive-keyring.gpg

The wget command above adds the keyring for Proxmox releases based on Debian Trixie. Once the proxmox-archive-keyring package is installed, it will manage this file. At that point, the hashes below may no longer match the hashes of this file, as keys for new Proxmox releases get added or removed. This is intended; apt will ensure that only trusted keys are being used. Modifying this file is discouraged once proxmox-archive-keyring is installed.

Verify the checksum afterwards with the sha256sum CLI tool:

# sha256sum /usr/share/keyrings/proxmox-archive-keyring.gpg
136673be77aba35dcce385b28737689ad64fd785a797e57897589aed08db6e45 /usr/share/keyrings/proxmox-archive-keyring.gpg

or the md5sum CLI tool:

# md5sum /usr/share/keyrings/proxmox-archive-keyring.gpg
77c8b1166d15ce8350102ab1bca2fcbf /usr/share/keyrings/proxmox-archive-keyring.gpg

Make sure the path you install the key to matches the Signed-By: lines in your repository stanzas.
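Comparing long digests by eye is error-prone, so the checksum comparison can be left to sha256sum itself. A minimal sketch, assuming GNU coreutils; the function name verify_sha256 is hypothetical, and the digest to pass is the one published in this section:

```shell
# Return 0 only if FILE has the expected SHA-256 digest.
verify_sha256() {
    file=$1
    expected=$2
    # sha256sum -c reads "<digest>  <path>" lines (two spaces) from stdin.
    printf '%s  %s\n' "$expected" "$file" | sha256sum -c - >/dev/null 2>&1
}

# Possible usage:
#   verify_sha256 /usr/share/keyrings/proxmox-archive-keyring.gpg <digest> \
#       && echo "keyring OK" || echo "keyring MISMATCH"
```

As noted above, a mismatch is expected once the proxmox-archive-keyring package manages the file.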

System Software Updates

Proxmox provides updates on a regular basis for all repositories. To install updates use the web-based GUI or the following CLI commands:

# apt-get update
# apt-get dist-upgrade
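Before installing, it can be useful to preview how many packages an upgrade would touch. The sketch below relies on the simulation mode of apt-get (-s), in which every package that would be installed or upgraded is reported on a line starting with Inst. The helper name count_pending is hypothetical.

```shell
# Count the packages a simulated upgrade would install or upgrade.
# Reads the output of "apt-get -s dist-upgrade" from stdin.
count_pending() {
    # grep -c exits non-zero when the count is 0; that is not an error here.
    grep -c '^Inst ' || true
}

# Possible usage (nothing is installed in simulation mode):
#   apt-get update
#   apt-get -s dist-upgrade | count_pending
```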

For occasionally upgrading Ceph to its succeeding major release, see Ceph Repositories.

The APT package management system is very flexible and provides many features, see man apt-get, or [Hertzog13] for additional information.

Regular updates are essential to get the latest patches and security related fixes. Major system upgrades are announced in the Proxmox VE Community Forum.

Firmware Updates

Firmware updates from this chapter should be applied when running Proxmox VE on a bare-metal server. Whether configuring firmware updates is appropriate within guests, e.g. when using device pass-through, depends strongly on your setup and is therefore out of scope.

In addition to regular software updates, firmware updates are also important for reliable and secure operation.

When obtaining and applying firmware updates, a combination of available options is recommended to get them as early as possible or at all.

The term firmware is usually divided linguistically into microcode (for CPUs) and firmware (for other devices).

Persistent Firmware

This section is suitable for all devices. Updated microcode, which is usually included in a BIOS/UEFI update, is stored on the motherboard, whereas other firmware is stored on the respective device. This persistent method is especially important for the CPU, as it enables the earliest possible regular loading of the updated microcode at boot time.

With some updates, such as for BIOS/UEFI or storage controller, the device configuration could be reset. Please follow the vendor’s instructions carefully and back up the current configuration.

Please check with your vendor which update methods are available.

- Convenient update methods for servers can include Dell’s Lifecycle Manager or Service Packs from HPE.

- Sometimes there are Linux utilities available as well. Examples are mlxup for NVIDIA ConnectX or bnxtnvm/niccli for Broadcom network cards.

- LVFS is also an option if there is a cooperation with the hardware vendor and supported hardware in use. The technical requirement for this is that the system was manufactured after 2014 and is booted via UEFI.

Proxmox VE ships its own version of the fwupd package to enable Secure Boot Support with the Proxmox signing key. This package consciously dropped the dependency recommendation for the udisks2 package, due to observed issues with its use on hypervisors. That means you must explicitly configure the correct mount point of the EFI partition in /etc/fwupd/daemon.conf, for example:

# Override the location used for the EFI system partition (ESP) path.
EspLocation=/boot/efi

If the update instructions require a host reboot, make sure that it can be done safely. See also Node Maintenance.

Runtime Firmware Files

This method stores firmware on the Proxmox VE operating system and will pass it to a device if its persisted firmware is less recent. It is supported by devices such as network and graphics cards, but not by those that rely on persisted firmware such as the motherboard and hard disks.

In Proxmox VE the package pve-firmware is already installed by default. Therefore, with the normal system updates (APT), included firmware of common hardware is automatically kept up to date.

An additional Debian Firmware Repository exists. It is enabled by default for new installations since Proxmox VE 9, but must be configured manually on older or upgraded systems.

If you try to install an additional firmware package but it conflicts, APT will abort the installation. Perhaps the particular firmware can be obtained in another way.

CPU Microcode Updates

Microcode updates are intended to fix found security vulnerabilities and other serious CPU bugs. While the CPU performance can be affected, a patched microcode is usually still more performant than an unpatched microcode where the kernel itself has to do mitigations. Depending on the CPU type, it is possible that performance results of the flawed factory state can no longer be achieved without knowingly running the CPU in an unsafe state.

To get an overview of present CPU vulnerabilities and their mitigations, run lscpu. Current real-world known vulnerabilities can only show up if the Proxmox VE host is up to date, its version not end of life, and has at least been rebooted since the last kernel update.
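The vulnerability status that lscpu reports is also exposed by the kernel as one file per issue under /sys/devices/system/cpu/vulnerabilities. The following sketch lists only the entries whose status begins with Vulnerable, which can be easier to scan than the full lscpu output. The function name list_vulnerable is hypothetical.

```shell
# Print "<name>: <status>" for every entry still reported as vulnerable.
# DIR defaults to the sysfs location used by the kernel.
list_vulnerable() {
    dir=${1:-/sys/devices/system/cpu/vulnerabilities}
    for f in "$dir"/*; do
        [ -f "$f" ] || continue
        status=$(cat "$f")
        case $status in
            Vulnerable*) printf '%s: %s\n' "$(basename "$f")" "$status" ;;
        esac
    done
}
```

An empty result means the running kernel reports every known issue as either not affected or mitigated; the caveats above about an up-to-date and rebooted host still apply.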

Besides the recommended microcode update via persistent BIOS/UEFI updates, there is also an independent method via Early OS Microcode Updates. It is convenient to use and also quite helpful when the motherboard vendor no longer provides BIOS/UEFI updates. Regardless of the method in use, a reboot is always needed to apply a microcode update.

Set up Early OS Microcode Updates

To set up microcode updates that are applied early on boot by the Linux kernel, you need to:

- Enable the Debian Firmware Repository
- Get the latest available packages by running apt update (or use the web interface, under Node → Updates)
- Install the CPU-vendor specific microcode package:
  - For Intel CPUs: apt install intel-microcode
  - For AMD CPUs: apt install amd64-microcode
- Reboot the Proxmox VE host

Any future microcode update will also require a reboot to be loaded.

Microcode Version

To get the current running microcode revision for comparison or debugging purposes:

# grep microcode /proc/cpuinfo | uniq
microcode : 0xf0

A microcode package has updates for many different CPUs. But updates specifically for your CPU might not come often. So, just looking at the date on the package won’t tell you when the company actually released an update for your specific CPU.

If you’ve installed a new microcode package and rebooted your Proxmox VE host, and this new microcode is newer than both the version baked into the CPU and the one from the motherboard’s firmware, you’ll see a message in the system log saying "microcode updated early".

# dmesg | grep microcode
[ 0.000000] microcode: microcode updated early to revision 0xf0, date = 2021-11-12
[ 0.896580] microcode: Microcode Update Driver: v2.2.
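For scripted comparisons before and after a reboot, the revision can be extracted without the field label. A small sketch, assuming the microcode field format of /proc/cpuinfo shown above; the function name microcode_revisions is hypothetical:

```shell
# Print each distinct microcode revision found in a cpuinfo-style file.
# FILE defaults to /proc/cpuinfo.
microcode_revisions() {
    awk -F': *' '/^microcode/ { print $2 }' "${1:-/proc/cpuinfo}" | sort -u
}

# Possible usage:
#   microcode_revisions > /root/microcode.before   # before the reboot
#   microcode_revisions | diff /root/microcode.before -   # after the reboot
```

More than one output line means that the logical CPUs do not all report the same revision.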

Troubleshooting

For debugging purposes, the set up Early OS Microcode Update applied regularly at system boot can be temporarily disabled as follows:

-

make sure that the host can be rebooted safely

-

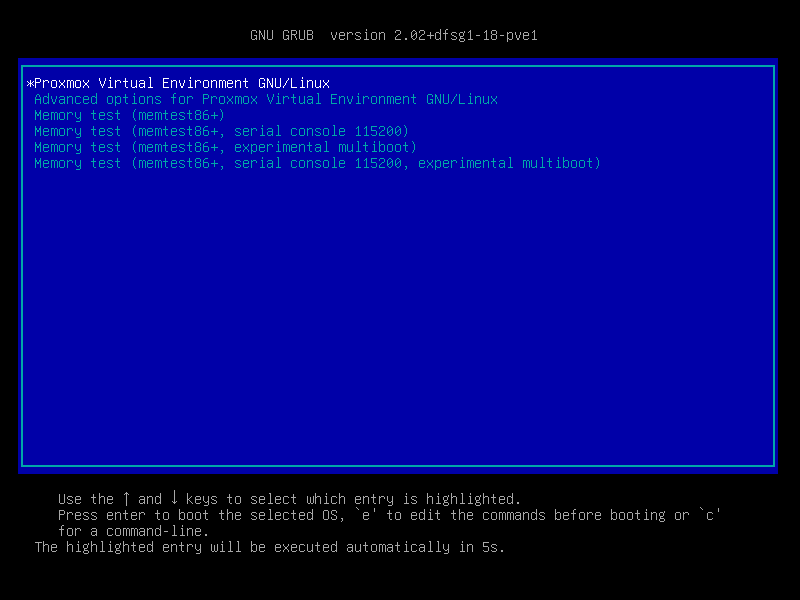

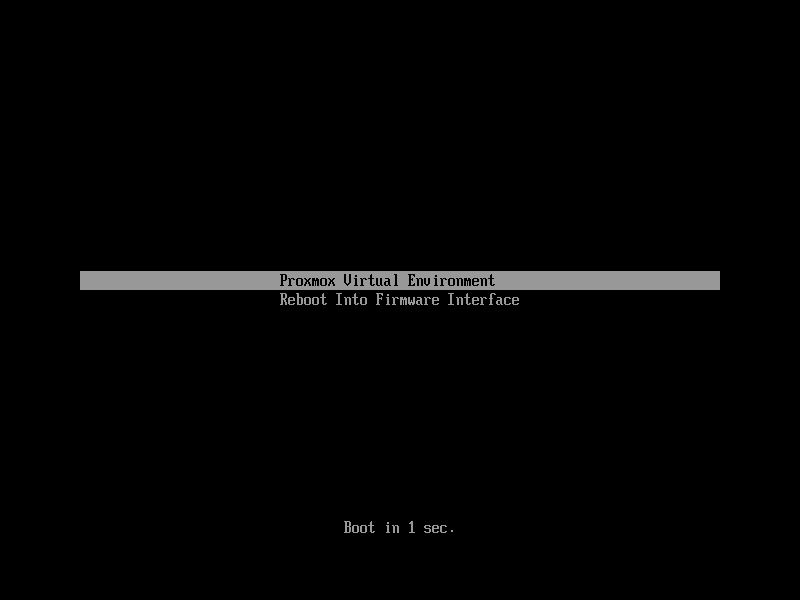

reboot the host to get to the GRUB menu (hold SHIFT if it is hidden)

-

at the desired Proxmox VE boot entry press E

-

go to the line which starts with linux and append separated by a space dis_ucode_ldr

-

press CTRL-X to boot this time without an Early OS Microcode Update

If a problem related to a recent microcode update is suspected, a package downgrade should be considered instead of package removal (apt purge <intel-microcode|amd64-microcode>). Otherwise, a too old persisted microcode might be loaded, even though a more recent one would run without problems.

A downgrade is possible if an earlier microcode package version is available in the Debian repository, as shown in this example:

# apt list -a intel-microcode
Listing... Done
intel-microcode/stable-security,now 3.20230808.1~deb12u1 amd64 [installed]
intel-microcode/stable 3.20230512.1 amd64

# apt install intel-microcode=3.202305*
...
Selected version '3.20230512.1' (Debian:12.1/stable [amd64]) for 'intel-microcode'
...
dpkg: warning: downgrading intel-microcode from 3.20230808.1~deb12u1 to 3.20230512.1
...
intel-microcode: microcode will be updated at next boot
...

Make sure (again) that the host can be rebooted safely. To apply an older microcode potentially included in the microcode package for your CPU type, reboot now.

It makes sense to hold the downgraded package for a while and try more recent versions again at a later time. Even if the package version is the same in the future, system updates may have fixed the experienced problem in the meantime.

# apt-mark hold intel-microcode
intel-microcode set on hold.

# apt-mark unhold intel-microcode
# apt update
# apt full-upgrade

Network Configuration

Proxmox VE uses the Linux network stack. This provides a lot of flexibility on how to set up the network on the Proxmox VE nodes. The configuration can be done either via the GUI, or by manually editing the file /etc/network/interfaces, which contains the whole network configuration. The interfaces(5) manual page contains the complete format description. All Proxmox VE tools try hard to keep direct user modifications, but using the GUI is still preferable, because it protects you from errors.

A Linux bridge interface (commonly called vmbrX) is needed to connect guests to the underlying physical network. It can be thought of as a virtual switch which the guests and physical interfaces are connected to. This section provides some examples on how the network can be set up to accommodate different use cases like redundancy with a bond, VLANs, or routed and NAT setups.

The Software Defined Network is an option for more complex virtual networks in Proxmox VE clusters.

Using the traditional Debian tools ifup and ifdown is discouraged if you are unsure, as they have some pitfalls, like interrupting all guest traffic on ifdown vmbrX but not reconnecting those guests again when doing ifup on the same bridge later.

Apply Network Changes

Proxmox VE does not write changes directly to /etc/network/interfaces. Instead, changes are written to a temporary file called /etc/network/interfaces.new, so that you can make many related changes at once. This also allows you to verify that your changes are correct before applying them, as a wrong network configuration may render a node inaccessible.
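Because the staged configuration is an ordinary file next to the active one, the pending changes can be reviewed with diff before they are applied. A minimal sketch; the function name show_pending_network_changes is hypothetical:

```shell
# Show the difference between the active configuration and its staged
# ".new" counterpart. FILE defaults to /etc/network/interfaces.
show_pending_network_changes() {
    current=${1:-/etc/network/interfaces}
    staged=$current.new
    if [ ! -e "$staged" ]; then
        echo "no staged changes"
        return 0
    fi
    # diff exits with 1 when the files differ; that is not an error here.
    diff -u "$current" "$staged" || true
}
```

Lines prefixed with + in the output are additions that would become active once the staged file is applied.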

Live-Reload Network with ifupdown2

With the recommended ifupdown2 package (default for new installations since Proxmox VE 7.0), it is possible to apply network configuration changes without a reboot. If you change the network configuration via the GUI, you can click the Apply Configuration button. This will move changes from the staging interfaces.new file to /etc/network/interfaces and apply them live.

If you made manual changes directly to the /etc/network/interfaces file, you can apply them by running ifreload -a.

If you installed Proxmox VE on top of Debian, or upgraded to Proxmox VE 7.0 from an older Proxmox VE installation, make sure ifupdown2 is installed: apt install ifupdown2

Naming Conventions

We currently use the following naming conventions for device names:

- Ethernet devices: en*, systemd network interface names. This naming scheme is used for new Proxmox VE installations since version 5.0.

- Ethernet devices: eth[N], where 0 ≤ N (eth0, eth1, …). This naming scheme is used for Proxmox VE hosts which were installed before the 5.0 release. When upgrading to 5.0, the names are kept as-is.

- Bridge names: Commonly vmbr[N], where 0 ≤ N ≤ 4094 (vmbr0 - vmbr4094), but you can use any alphanumeric string that starts with a character and is at most 10 characters long.

- Bonds: bond[N], where 0 ≤ N (bond0, bond1, …)

- VLANs: Simply add the VLAN number to the device name, separated by a period (eno1.50, bond1.30)

This makes it easier to debug network problems, because the device name implies the device type.

Systemd Network Interface Names

Systemd defines a versioned naming scheme for network device names. The scheme uses the two-character prefix en for Ethernet network devices. The next characters depend on the device driver, device location and other attributes. Some possible patterns are:

- o<index>[n<phys_port_name>|d<dev_port>]: devices on board
- s<slot>[f<function>][n<phys_port_name>|d<dev_port>]: devices by hotplug id
- [P<domain>]p<bus>s<slot>[f<function>][n<phys_port_name>|d<dev_port>]: devices by bus id
- x<MAC>: devices by MAC address

Some examples for the most common patterns are:

- eno1: the first on-board NIC
- enp3s0f1: function 1 of the NIC on PCI bus 3, slot 0

For a full list of possible device name patterns, see the systemd.net-naming-scheme(7) manpage.
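The patterns listed in this section can be told apart by the character that follows the en prefix. The sketch below labels a device name accordingly. It only covers the four patterns shown here plus the older eth[N] names, not the full scheme from the manpage, and the function name classify_ifname is hypothetical.

```shell
# Label an Ethernet device name by the naming pattern it appears to follow.
classify_ifname() {
    case $1 in
        enx*)       echo "MAC address" ;;
        eno*)       echo "on-board index" ;;
        ens*)       echo "hotplug slot" ;;
        enp*|enP*)  echo "PCI bus location" ;;
        eth[0-9]*)  echo "legacy eth name" ;;
        *)          echo "other" ;;
    esac
}

# Possible usage: label every interface known to the kernel.
#   for i in /sys/class/net/*; do
#       printf '%s: %s\n' "$(basename "$i")" "$(classify_ifname "$(basename "$i")")"
#   done
```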

A new version of systemd may define a new version of the network device naming scheme, which it then uses by default. Consequently, updating to a newer systemd version, for example during a major Proxmox VE upgrade, can change the names of network devices and require adjusting the network configuration. To avoid name changes due to a new version of the naming scheme, you can manually pin a particular naming scheme version (see below).

However, even with a pinned naming scheme version, network device names can still change due to kernel or driver updates. In order to avoid name changes for a particular network device altogether, you can manually override its name using a link file (see below).

For more information on network interface names, see Predictable Network Interface Names.

Pinning a specific naming scheme version

You can pin a specific version of the naming scheme for network devices by adding the net.naming-scheme=<version> parameter to the kernel command line. For a list of naming scheme versions, see the systemd.net-naming-scheme(7) manpage.

For example, to pin the version v252, which is the latest naming scheme version for a fresh Proxmox VE 8.0 installation, add the following kernel command-line parameter:

net.naming-scheme=v252

See also this section on editing the kernel command line. You need to reboot for the changes to take effect.

Overriding network device names

Using the pve-network-interface-pinning Tool

Proxmox VE provides a tool for automatically generating .link files for overriding the name of network devices. It also automatically replaces the occurrences of the old interface name in the following files:

- /etc/network/interfaces
- /etc/pve/nodes/<nodename>/host.fw
- /etc/pve/sdn/controllers.cfg
- /etc/pve/sdn/fabrics.cfg

Since the generated mapping is local to the node it is generated on, interface names contained in the Firewall Datacenter configuration (/etc/pve/firewall/cluster.fw) are not automatically updated.

The generated link files are stored in /usr/local/lib/systemd/network. For the configuration files a new file will be generated in the same place with a .new suffix. This way you can inspect the changes made to the configuration by using diff (or another diff viewer of your choice):

diff -y /etc/network/interfaces /etc/network/interfaces.new

If you see any problematic changes or want to revert the changes made by the pinning tool before rebooting, simply delete all .new files and the respective link files from /usr/local/lib/systemd/network.

The following command will generate a .link file for all physical network interfaces that do not yet have a .link file and update selected Proxmox VE configuration files (see above). The generated names will use the default prefix nic, so the resulting interface names will be nic1, nic2, …

pve-network-interface-pinning generate

You can override the default prefix with the --prefix flag:

pve-network-interface-pinning generate --prefix myprefix

It is also possible to pin only a specific interface:

pve-network-interface-pinning generate --interface enp1s0

When pinning a specific interface, you can specify the exact name that the interface should be pinned to:

pve-network-interface-pinning generate --interface enp1s0 --target-name if42

In order to apply the changes made by pve-network-interface-pinning to the network configuration, the node needs to be rebooted.

Manual method

You can manually assign a name to a particular network device using a custom systemd.link file. This overrides the name that would be assigned according to the latest network device naming scheme. This way, you can avoid naming changes due to kernel updates, driver updates or newer versions of the naming scheme.

Custom link files should be placed in /etc/systemd/network/ and named <n>-<id>.link, where n is a priority smaller than 99 and id is some identifier. A link file has two sections: [Match] determines which interfaces the file will apply to; [Link] determines how these interfaces should be configured, including their naming.

To assign a name to a particular network device, you need a way to uniquely and permanently identify that device in the [Match] section. One possibility is to match the device’s MAC address using the MACAddress option, as it is unlikely to change.

The [Match] section should also contain a Type option to make sure it only matches the expected physical interface, and not bridge/bond/VLAN interfaces with the same MAC address. In most setups, Type should be set to ether to match only Ethernet devices, but some setups may require other choices. See the systemd.link(5) manpage for more details.

Then, you can assign a name using the Name option in the [Link] section.

Link files are copied to the initramfs, so it is recommended to refresh the initramfs after adding, modifying, or removing a link file:

# update-initramfs -u -k all

For example, to assign the name enwan0 to the Ethernet device with MAC address aa:bb:cc:dd:ee:ff, create a file /etc/systemd/network/10-enwan0.link with the following contents:

[Match]
MACAddress=aa:bb:cc:dd:ee:ff
Type=ether

[Link]
Name=enwan0

Do not forget to adjust /etc/network/interfaces to use the new name, and refresh your initramfs as described above. You need to reboot the node for the change to take effect.
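When several devices need a custom name, writing the link files by hand becomes repetitive. The following sketch prints a link file like the one above for a given MAC address and name, after a basic format check of the MAC address. The function name make_link_file is hypothetical; the output still has to be saved under /etc/systemd/network/ and followed by the initramfs refresh and reboot described above.

```shell
# Print a systemd .link file that assigns NAME to the Ethernet device
# with the given MAC address.
make_link_file() {
    mac=$1
    name=$2
    # Reject anything that is not six colon-separated hex octets.
    if ! printf '%s\n' "$mac" | grep -Eq '^([0-9A-Fa-f]{2}:){5}[0-9A-Fa-f]{2}$'; then
        echo "invalid MAC address: $mac" >&2
        return 1
    fi
    printf '[Match]\nMACAddress=%s\nType=ether\n\n[Link]\nName=%s\n' "$mac" "$name"
}

# Possible usage:
#   make_link_file aa:bb:cc:dd:ee:ff enwan0 > /etc/systemd/network/10-enwan0.link
#   update-initramfs -u -k all
```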

It is recommended to assign a name starting with en or eth so that Proxmox VE recognizes the interface as a physical network device which can then be configured via the GUI. Also, you should ensure that the name will not clash with other interface names in the future. One possibility is to assign a name that does not match any name pattern that systemd uses for network interfaces (see above), such as enwan0 in the example above.

For more information on link files, see the systemd.link(5) manpage.

Choosing a network configuration

Depending on your current network organization and your resources you can choose either a bridged, routed, or masquerading networking setup.

Proxmox VE server in a private LAN, using an external gateway to reach the internet

The Bridged model makes the most sense in this case, and this is also the default mode on new Proxmox VE installations. Each of your guest systems will have a virtual interface attached to the Proxmox VE bridge. This is similar in effect to having the guest network card directly connected to a new switch on your LAN, with the Proxmox VE host playing the role of the switch.

Proxmox VE server at hosting provider, with public IP ranges for Guests

For this setup, you can use either a Bridged or Routed model, depending on what your provider allows.

Proxmox VE server at hosting provider, with a single public IP address

In that case, the only way to get outgoing network access for your guest systems is to use Masquerading. For incoming network access to your guests, you will need to configure Port Forwarding.

For further flexibility, you can configure VLANs (IEEE 802.1q) and network bonding, also known as "link aggregation". That way it is possible to build complex and flexible virtual networks.

Default Configuration using a Bridge

Bridges are like physical network switches implemented in software. All virtual guests can share a single bridge, or you can create multiple bridges to separate network domains. Each host can have up to 4094 bridges.

The installation program creates a single bridge named vmbr0, which is connected to the first Ethernet card. The corresponding configuration in /etc/network/interfaces might look like this:

auto lo
iface lo inet loopback

iface eno1 inet manual

auto vmbr0
iface vmbr0 inet static
        address 192.168.10.2/24
        gateway 192.168.10.1
        bridge-ports eno1
        bridge-stp off
        bridge-fd 0

Virtual machines behave as if they were directly connected to the physical network. The network, in turn, sees each virtual machine as having its own MAC, even though there is only one network cable connecting all of these VMs to the network.

Routed Configuration

Most hosting providers do not support the above setup. For security reasons, they disable networking as soon as they detect multiple MAC addresses on a single interface.

|

|

Some providers allow you to register additional MACs through their management interface. This avoids the problem, but can be clumsy to configure because you need to register a MAC for each of your VMs. |

You can avoid the problem by “routing” all traffic via a single interface. This makes sure that all network packets use the same MAC address.

A common scenario is that you have a public IP (assume 198.51.100.5 for this example), and an additional IP block for your VMs (203.0.113.16/28). We recommend the following setup for such situations:

auto lo

iface lo inet loopback

auto eno0

iface eno0 inet static

address 198.51.100.5/29

gateway 198.51.100.1

post-up echo 1 > /proc/sys/net/ipv4/ip_forward

post-up echo 1 > /proc/sys/net/ipv4/conf/eno0/proxy_arp

auto vmbr0

iface vmbr0 inet static

address 203.0.113.17/28

bridge-ports none

bridge-stp off

bridge-fd 0
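
To see which addresses of the additional block remain available once the bridge has taken 203.0.113.17, the usable host range of a CIDR block can be computed. The following is a minimal sketch in POSIX shell; the function names (ip_to_int, int_to_ip, host_range) are hypothetical helpers and not part of any Proxmox VE tool, and prefix lengths of /31 and /32 are not handled.

```shell
#!/bin/sh
# Sketch: usable host range of an IPv4 CIDR block (prefix lengths up to /30).

# Convert a dotted-quad address to a 32-bit integer. The function body is a
# subshell, so the IFS change does not leak into the caller.
ip_to_int() (
    IFS=.
    set -- $1
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
)

# Convert a 32-bit integer back to dotted-quad notation.
int_to_ip() {
    echo "$(( ($1 >> 24) & 255 )).$(( ($1 >> 16) & 255 )).$(( ($1 >> 8) & 255 )).$(( $1 & 255 ))"
}

# Print the first and last usable host address of a block, e.g. 203.0.113.16/28.
host_range() {
    prefix=${1#*/}
    addr=$(ip_to_int "${1%/*}")
    size=$(( 1 << (32 - prefix) ))
    network=$(( addr - addr % size ))
    echo "$(int_to_ip $(( network + 1 ))) $(int_to_ip $(( network + size - 2 )))"
}

host_range 203.0.113.16/28
```

For the example block this prints 203.0.113.17 203.0.113.30. The first usable address is the one assigned to vmbr0 in the configuration above.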

Masquerading (NAT) with iptables

Masquerading allows guests having only a private IP address to access the network by using the host IP address for outgoing traffic. Each outgoing packet is rewritten by iptables to appear as originating from the host, and responses are rewritten accordingly to be routed to the original sender.

auto lo

iface lo inet loopback

auto eno1

#real IP address

iface eno1 inet static

address 198.51.100.5/24

gateway 198.51.100.1

auto vmbr0

#private sub network

iface vmbr0 inet static

address 10.10.10.1/24

bridge-ports none

bridge-stp off

bridge-fd 0

post-up echo 1 > /proc/sys/net/ipv4/ip_forward

post-up iptables -t nat -A POSTROUTING -s '10.10.10.0/24' -o eno1 -j MASQUERADE

post-down iptables -t nat -D POSTROUTING -s '10.10.10.0/24' -o eno1 -j MASQUERADE

|

|

In some masquerade setups with firewall enabled, conntrack zones might be needed for outgoing connections. Otherwise the firewall could block outgoing connections since they will prefer the POSTROUTING of the VM bridge (and not MASQUERADE). |

Adding these lines in the /etc/network/interfaces can fix this problem:

post-up iptables -t raw -I PREROUTING -i fwbr+ -j CT --zone 1

post-down iptables -t raw -D PREROUTING -i fwbr+ -j CT --zone 1

For more information about this, refer to the following links:

Linux Bond

Bonding (also called NIC teaming or Link Aggregation) is a technique for binding multiple NICs to a single network device. It can be used to achieve different goals, such as making the network fault-tolerant, increasing performance, or both.

High-speed hardware like Fibre Channel and the associated switching hardware can be quite expensive. By doing link aggregation, two NICs can appear as one logical interface, resulting in up to double the speed. This is a native Linux kernel feature that is supported by most switches. If your nodes have multiple Ethernet ports, you can distribute your points of failure by running network cables to different switches and the bonded connection will fail over to one cable or the other in case of network trouble.

Aggregated links can improve live-migration delays and improve the speed of replication of data between Proxmox VE Cluster nodes.

There are 7 modes for bonding:

-

Round-robin (balance-rr): Transmit network packets in sequential order from the first available network interface (NIC) slave through the last. This mode provides load balancing and fault tolerance.

-

Active-backup (active-backup): Only one NIC slave in the bond is active. A different slave becomes active if, and only if, the active slave fails. The single logical bonded interface’s MAC address is externally visible on only one NIC (port) to avoid distortion in the network switch. This mode provides fault tolerance.

-

XOR (balance-xor): Transmit network packets based on [(source MAC address XOR’d with destination MAC address) modulo NIC slave count]. This selects the same NIC slave for each destination MAC address. This mode provides load balancing and fault tolerance.

-

Broadcast (broadcast): Transmit network packets on all slave network interfaces. This mode provides fault tolerance.

-

IEEE 802.3ad Dynamic link aggregation (802.3ad)(LACP): Creates aggregation groups that share the same speed and duplex settings. Utilizes all slave network interfaces in the active aggregator group according to the 802.3ad specification.

-

Adaptive transmit load balancing (balance-tlb): Linux bonding driver mode that does not require any special network-switch support. The outgoing network packet traffic is distributed according to the current load (computed relative to the speed) on each network interface slave. Incoming traffic is received by one currently designated slave network interface. If this receiving slave fails, another slave takes over the MAC address of the failed receiving slave.

-

Adaptive load balancing (balance-alb): Includes balance-tlb plus receive load balancing (rlb) for IPv4 traffic, and does not require any special network switch support. The receive load balancing is achieved by ARP negotiation. The bonding driver intercepts the ARP Replies sent by the local system on their way out and overwrites the source hardware address with the unique hardware address of one of the NIC slaves in the single logical bonded interface such that different network-peers use different MAC addresses for their network packet traffic.
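
The slave selection formula given for balance-xor can be illustrated with a few lines of POSIX shell. This is a simplified sketch that follows the formula as worded above (whole MAC addresses XOR'd, modulo the slave count); it is not the kernel's exact implementation, and the function names and MAC addresses are made up for illustration.

```shell
#!/bin/sh
# Sketch: pick a slave index as (source MAC XOR destination MAC) modulo slave count.

# Strip the colons from a MAC address and read it as one 48-bit hexadecimal number.
mac_to_int() {
    hex=$(printf '%s' "$1" | tr -d ':')
    echo $(( 0x$hex ))
}

# Arguments: source MAC, destination MAC, number of slaves in the bond.
xor_slave_index() {
    src=$(mac_to_int "$1")
    dst=$(mac_to_int "$2")
    echo $(( (src ^ dst) % $3 ))
}

# Hypothetical addresses; with two slaves this pair maps to slave index 1.
xor_slave_index 52:54:00:12:34:56 52:54:00:ab:cd:ef 2
```

Because the result depends only on the two MAC addresses, traffic between the same pair of hosts always leaves through the same slave. A single connection therefore does not exceed the speed of one NIC.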

If your switch supports the LACP (IEEE 802.3ad) protocol, then we recommend using the corresponding bonding mode (802.3ad). Otherwise you should generally use the active-backup mode.

For the cluster network (Corosync) we recommend configuring it with multiple networks. Corosync does not need a bond for network redundancy as it can switch between networks by itself, if one becomes unusable. Some bond modes are known to be problematic for Corosync, see Corosync over Bonds.

The following bond configuration can be used as a distributed/shared storage network. The benefit is that you get more speed and the network will be fault-tolerant.

auto lo

iface lo inet loopback

iface eno1 inet manual

iface eno2 inet manual

iface eno3 inet manual

auto bond0

iface bond0 inet static

bond-slaves eno1 eno2

address 192.168.1.2/24

bond-miimon 100

bond-mode 802.3ad

bond-xmit-hash-policy layer2+3

auto vmbr0

iface vmbr0 inet static

address 10.10.10.2/24

gateway 10.10.10.1

bridge-ports eno3

bridge-stp off

bridge-fd 0

Another possibility is to use the bond directly as the bridge port. This can be used to make the guest network fault-tolerant.

auto lo

iface lo inet loopback

iface eno1 inet manual

iface eno2 inet manual

auto bond0

iface bond0 inet manual

bond-slaves eno1 eno2

bond-miimon 100

bond-mode 802.3ad

bond-xmit-hash-policy layer2+3

auto vmbr0

iface vmbr0 inet static

address 10.10.10.2/24

gateway 10.10.10.1

bridge-ports bond0

bridge-stp off

bridge-fd 0

VLAN 802.1Q

A virtual LAN (VLAN) is a broadcast domain that is partitioned and isolated in the network at layer two. So it is possible to have multiple networks (4096) in a physical network, each independent of the other ones.

Each VLAN network is identified by a number, often called a tag. Network packets are then tagged to identify which virtual network they belong to.

VLAN for Guest Networks

Proxmox VE supports this setup out of the box. You can specify the VLAN tag when you create a VM. The VLAN tag is part of the guest network configuration. The networking layer supports different modes to implement VLANs, depending on the bridge configuration:

-

VLAN awareness on the Linux bridge: In this case, each guest’s virtual network card is assigned to a VLAN tag, which is transparently supported by the Linux bridge. Trunk mode is also possible, but that makes configuration in the guest necessary.

-

"traditional" VLAN on the Linux bridge: In contrast to the VLAN awareness method, this method is not transparent and creates a VLAN device with associated bridge for each VLAN. That is, creating a guest on VLAN 5 for example, would create two interfaces eno1.5 and vmbr0v5, which would remain until a reboot occurs.

-

Open vSwitch VLAN: This mode uses the OVS VLAN feature.

-

Guest configured VLAN: VLANs are assigned inside the guest. In this case, the setup is completely done inside the guest and can not be influenced from the outside. The benefit is that you can use more than one VLAN on a single virtual NIC.
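
The interface naming used by the "traditional" VLAN method can be sketched as a small helper. This is only an illustration: vlan_ifnames is a hypothetical function, and the accepted tag range of 1 to 4094 is an assumption based on the IDs that IEEE 802.1Q leaves available for general use.

```shell
#!/bin/sh
# Sketch: derive the VLAN device and bridge names created by the
# "traditional" VLAN method for a given NIC, bridge and tag.
vlan_ifnames() {
    nic=$1
    bridge=$2
    tag=$3
    # Assumption: tags 0 and 4095 are reserved, so only 1-4094 are accepted.
    if [ "$tag" -lt 1 ] || [ "$tag" -gt 4094 ]; then
        echo "invalid VLAN tag: $tag" >&2
        return 1
    fi
    echo "${nic}.${tag} ${bridge}v${tag}"
}

vlan_ifnames eno1 vmbr0 5
```

For VLAN 5 on eno1 and vmbr0 this prints eno1.5 vmbr0v5, matching the two interfaces named in the list above.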

VLAN on the Host

To allow host communication with an isolated network, it is possible to apply VLAN tags to any network device (NIC, Bond, Bridge). In general, you should configure the VLAN on the interface with the least abstraction layers between itself and the physical NIC.

For example, in a default configuration you may want to place the host management address on a separate VLAN. The following configuration does this for VLAN 5 with a traditional Linux bridge:

auto lo

iface lo inet loopback

iface eno1 inet manual

iface eno1.5 inet manual

auto vmbr0v5

iface vmbr0v5 inet static

address 10.10.10.2/24

gateway 10.10.10.1

bridge-ports eno1.5

bridge-stp off

bridge-fd 0

auto vmbr0

iface vmbr0 inet manual

bridge-ports eno1

bridge-stp off

bridge-fd 0

The same setup with a VLAN-aware Linux bridge:

auto lo

iface lo inet loopback

iface eno1 inet manual

auto vmbr0.5

iface vmbr0.5 inet static

address 10.10.10.2/24

gateway 10.10.10.1

auto vmbr0

iface vmbr0 inet manual

bridge-ports eno1

bridge-stp off

bridge-fd 0

bridge-vlan-aware yes

bridge-vids 2-4094

The next example is the same setup but a bond is used to make this network fail-safe.

auto lo

iface lo inet loopback

iface eno1 inet manual

iface eno2 inet manual

auto bond0

iface bond0 inet manual

bond-slaves eno1 eno2

bond-miimon 100

bond-mode 802.3ad

bond-xmit-hash-policy layer2+3

iface bond0.5 inet manual

auto vmbr0v5

iface vmbr0v5 inet static

address 10.10.10.2/24

gateway 10.10.10.1

bridge-ports bond0.5

bridge-stp off

bridge-fd 0

auto vmbr0

iface vmbr0 inet manual

bridge-ports bond0

bridge-stp off

bridge-fd 0

Disabling IPv6 on the Node

Proxmox VE works correctly in all environments, irrespective of whether IPv6 is deployed or not. We recommend leaving all settings at the provided defaults.

Should you still need to disable support for IPv6 on your node, do so by creating an appropriate sysctl.conf (5) snippet file and setting the proper sysctls, for example adding /etc/sysctl.d/disable-ipv6.conf with content:

net.ipv6.conf.all.disable_ipv6 = 1

net.ipv6.conf.default.disable_ipv6 = 1

This method is preferred to disabling the loading of the IPv6 module on the kernel commandline.

Disabling MAC Learning on a Bridge

By default, MAC learning is enabled on a bridge to ensure a smooth experience with virtual guests and their networks.

But in some environments this can be undesired. Since Proxmox VE 7.3 you can disable MAC learning on the bridge by setting the bridge-disable-mac-learning 1 configuration on a bridge in /etc/network/interfaces, for example:

# ...

auto vmbr0

iface vmbr0 inet static

address 10.10.10.2/24

gateway 10.10.10.1

bridge-ports ens18

bridge-stp off

bridge-fd 0

bridge-disable-mac-learning 1

Once enabled, Proxmox VE will manually add the configured MAC addresses of VMs and Containers to the bridge's forwarding database to ensure that guests can still use the network, but only when they are using their actual MAC address.

Time Synchronization

The Proxmox VE cluster stack itself relies heavily on the fact that all the nodes have precisely synchronized time. Some other components, like Ceph, also won’t work properly if the local time on all nodes is not in sync.

Time synchronization between nodes can be achieved using the “Network Time Protocol” (NTP). As of Proxmox VE 7, chrony is used as the default NTP daemon, while Proxmox VE 6 uses systemd-timesyncd. Both come preconfigured to use a set of public servers.

|

|

If you upgrade your system to Proxmox VE 7, it is recommended that you manually install either chrony, ntp or openntpd. |

Using Custom NTP Servers

In some cases, it might be desired to use non-default NTP servers. For example, if your Proxmox VE nodes do not have access to the public internet due to restrictive firewall rules, you need to set up local NTP servers and tell the NTP daemon to use them.

For systems using chrony:

Specify which servers chrony should use in /etc/chrony/chrony.conf:

server ntp1.example.com iburst

server ntp2.example.com iburst

server ntp3.example.com iburst

Restart chrony:

# systemctl restart chronyd

Check the journal to confirm that the newly configured NTP servers are being used:

# journalctl --since -1h -u chrony

...

Aug 26 13:00:09 node1 systemd[1]: Started chrony, an NTP client/server.

Aug 26 13:00:15 node1 chronyd[4873]: Selected source 10.0.0.1 (ntp1.example.com)

Aug 26 13:00:15 node1 chronyd[4873]: System clock TAI offset set to 37 seconds

...

For systems using systemd-timesyncd:

Specify which servers systemd-timesyncd should use in /etc/systemd/timesyncd.conf:

[Time]

NTP=ntp1.example.com ntp2.example.com ntp3.example.com ntp4.example.com

Then, restart the synchronization service (systemctl restart systemd-timesyncd), and verify that your newly configured NTP servers are in use by checking the journal (journalctl --since -1h -u systemd-timesyncd):

...

Oct 07 14:58:36 node1 systemd[1]: Stopping Network Time Synchronization...

Oct 07 14:58:36 node1 systemd[1]: Starting Network Time Synchronization...

Oct 07 14:58:36 node1 systemd[1]: Started Network Time Synchronization.

Oct 07 14:58:36 node1 systemd-timesyncd[13514]: Using NTP server 10.0.0.1:123 (ntp1.example.com).

Oct 07 14:58:36 node1 systemd-timesyncd[13514]: interval/delta/delay/jitter/drift 64s/-0.002s/0.020s/0.000s/-31ppm

...

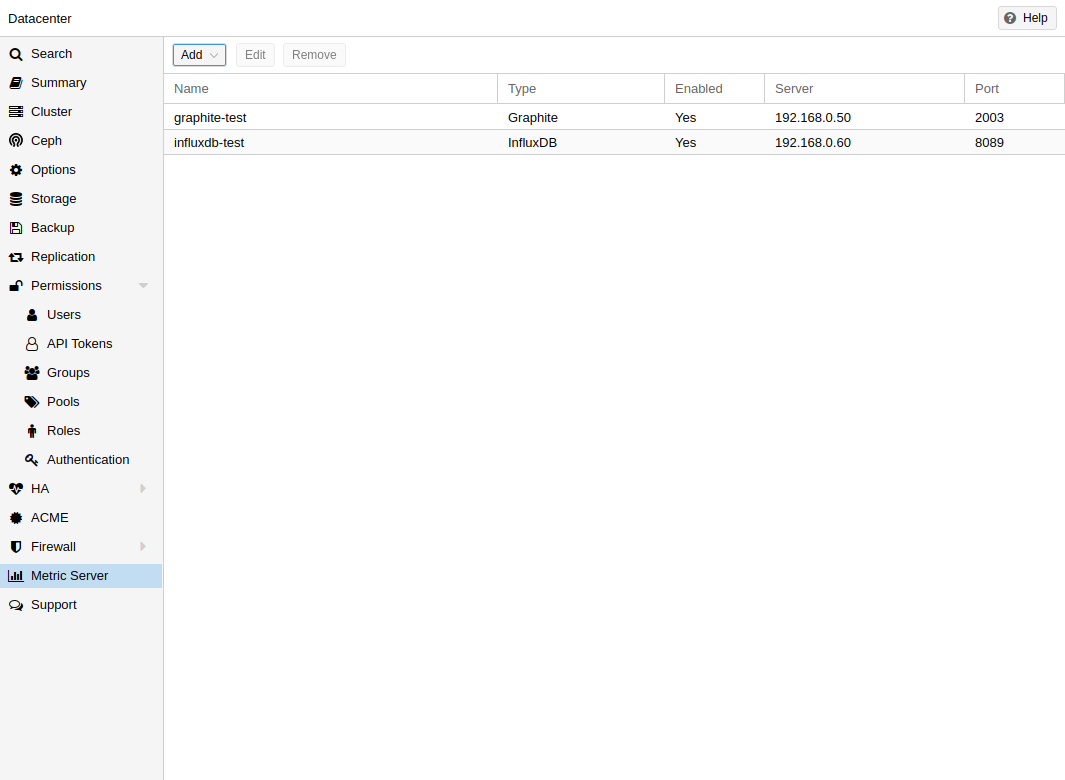

External Metric Server

In Proxmox VE, you can define external metric servers, which will periodically receive various stats about your hosts, virtual guests and storages.

Currently supported are:

-

Graphite (see https://graphiteapp.org )

-

InfluxDB (see https://www.influxdata.com/time-series-platform/influxdb/ )

The external metric server definitions are saved in /etc/pve/status.cfg, and can be edited through the web interface.

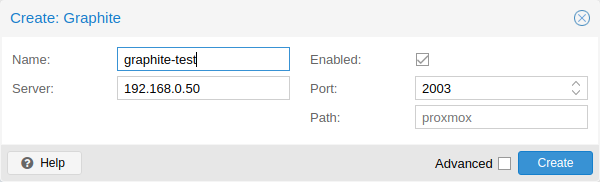

Graphite server configuration

By default, Proxmox VE sends the data over UDP, so the graphite server has to be configured to accept this. Here the maximum transmission unit (MTU) can be configured for environments not using the standard 1500 MTU.

You can also configure the plugin to use TCP. In order not to block the important pvestatd statistic collection daemon, a timeout is required to cope with network problems.

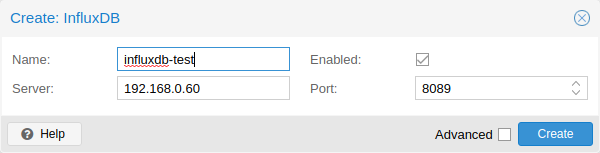

Influxdb plugin configuration

Proxmox VE sends the data over UDP, so the influxdb server has to be configured for this. The MTU can also be configured here, if necessary.

Here is an example configuration for influxdb (on your influxdb server):

[[udp]]

enabled = true

bind-address = "0.0.0.0:8089"

database = "proxmox"

batch-size = 1000

batch-timeout = "1s"

With this configuration, your server listens on all IP addresses on port 8089, and writes the data in the proxmox database.

Alternatively, the plugin can be configured to use the http(s) API of InfluxDB 2.x. InfluxDB 1.8.x does contain a forwards compatible API endpoint for this v2 API.

To use it, set influxdbproto to http or https (depending on your configuration). By default, Proxmox VE uses the organization proxmox and the bucket/db proxmox (they can be set with the configuration options organization and bucket, respectively).

Since InfluxDB’s v2 API is only available with authentication, you have to generate a token that can write into the correct bucket and set it.

In the v2 compatible API of 1.8.x, you can use user:password as token (if required), and can omit the organization since that has no meaning in InfluxDB 1.x.

You can also set the HTTP Timeout (default is 1s) with the timeout setting, as well as the maximum batch size (default 25000000 bytes) with the max-body-size setting (this corresponds to the InfluxDB setting with the same name).

Disk Health Monitoring

Although a robust and redundant storage is recommended, it can be very helpful to monitor the health of your local disks.

Starting with Proxmox VE 4.3, the package smartmontools [1] is installed and required. This is a set of tools to monitor and control the S.M.A.R.T. system for local hard disks.

You can get the status of a disk by issuing the following command:

# smartctl -a /dev/sdX

where /dev/sdX is the path to one of your local disks.

If the output says:

SMART support is: Disabled

you can enable it with the command:

# smartctl -s on /dev/sdX

For more information on how to use smartctl, please see man smartctl.

By default, the smartmontools daemon smartd is active and enabled, and scans any devices matching

-

/dev/sd[a-z]

-

/dev/sd[a-z][a-z]

-

/dev/hd[a-t]

-

or /dev/nvme[0-99]

every 30 minutes for errors and warnings, and sends an e-mail to root if it detects a problem.

For more information about how to configure smartd, please see man smartd and man smartd.conf.

If you use your hard disks with a hardware raid controller, there are most likely tools to monitor the disks in the raid array and the array itself. For more information about this, please refer to the vendor of your raid controller.

Logical Volume Manager (LVM)

Most people install Proxmox VE directly on a local disk. The Proxmox VE installation CD offers several options for local disk management, and the current default setup uses LVM. The installer lets you select a single disk for such setup, and uses that disk as physical volume for the Volume Group (VG) pve. The following output is from a test installation using a small 8GB disk:

# pvs

PV VG Fmt Attr PSize PFree

/dev/sda3 pve lvm2 a-- 7.87g 876.00m

# vgs

VG #PV #LV #SN Attr VSize VFree

pve 1 3 0 wz--n- 7.87g 876.00m

The installer allocates three Logical Volumes (LV) inside this VG:

# lvs

LV VG Attr LSize Pool Origin Data% Meta%

data pve twi-a-tz-- 4.38g 0.00 0.63

root pve -wi-ao---- 1.75g

swap pve -wi-ao---- 896.00m

-

root: Formatted as ext4, and contains the operating system.

-

swap: Swap partition.

-

data: This volume uses LVM-thin, and is used to store VM images. LVM-thin is preferable for this task, because it offers efficient support for snapshots and clones.

For Proxmox VE versions up to 4.1, the installer creates a standard logical volume called “data”, which is mounted at /var/lib/vz.

Starting from version 4.2, the logical volume “data” is an LVM-thin pool, used to store block based guest images, and /var/lib/vz is simply a directory on the root file system.

Hardware

We highly recommend using a hardware RAID controller (with BBU) for such setups. This increases performance, provides redundancy, and makes disk replacements easier (hot-pluggable).

LVM itself does not need any special hardware, and memory requirements are very low.

Bootloader

We install two boot loaders by default. The first partition contains the standard GRUB boot loader. The second partition is an EFI System Partition (ESP), which makes it possible to boot on EFI systems and to apply persistent firmware updates from the user space.

Creating a Volume Group

Let’s assume we have an empty disk /dev/sdb, onto which we want to create a volume group named “vmdata”.

|

|

Please note that the following commands will destroy all existing data on /dev/sdb. |

First create a partition.

# sgdisk -N 1 /dev/sdb

Create a Physical Volume (PV) without confirmation and with a metadata size of 250K.

# pvcreate --metadatasize 250k -y -ff /dev/sdb1

Create a volume group named “vmdata” on /dev/sdb1

# vgcreate vmdata /dev/sdb1

Creating an extra LV for /var/lib/vz

This can be easily done by creating a new thin LV.

# lvcreate -n <Name> -V <Size[M,G,T]> <VG>/<LVThin_pool>

A real world example:

# lvcreate -n vz -V 10G pve/data

Now a filesystem must be created on the LV.

# mkfs.ext4 /dev/pve/vz

Finally, this has to be mounted.

|

|

Be sure that /var/lib/vz is empty. On a default installation it is not. |

To make it always accessible add the following line in /etc/fstab.

# echo '/dev/pve/vz /var/lib/vz ext4 defaults 0 2' >> /etc/fstab

ZFS on Linux

ZFS is a combined file system and logical volume manager designed by Sun Microsystems. Starting with Proxmox VE 3.4, the native Linux kernel port of the ZFS file system was introduced as an optional file system and also as an additional selection for the root file system. There is no need to manually compile ZFS modules - all packages are included.

By using ZFS, it's possible to achieve maximum enterprise features with low budget hardware, but also high performance systems by leveraging SSD caching or even SSD only setups. ZFS can replace cost-intensive hardware RAID cards with moderate CPU and memory load combined with easy management.

-

Easy configuration and management with Proxmox VE GUI and CLI.

-

Reliable

-

Protection against data corruption

-

Data compression on file system level

-

Snapshots

-

Copy-on-write clone

-

Various raid levels: RAID0, RAID1, RAID10, RAIDZ-1, RAIDZ-2, RAIDZ-3, dRAID, dRAID2, dRAID3

-

Can use SSD for cache

-

Self healing

-

Continuous integrity checking

-

Designed for high storage capacities

-

Asynchronous replication over network

-

Open Source

-

Encryption

-

…

Hardware

ZFS depends heavily on memory, so you need at least 8GB to start. In practice, use as much as you can get for your hardware/budget. To prevent data corruption, we recommend the use of high quality ECC RAM.

If you use a dedicated cache and/or log disk, you should use an enterprise class SSD. This can increase the overall performance significantly.

|

|

Do not use ZFS on top of a hardware RAID controller which has its own cache management. ZFS needs to communicate directly with the disks. An HBA adapter or something like an LSI controller flashed in “IT” mode is more appropriate. |

If you are experimenting with an installation of Proxmox VE inside a VM (Nested Virtualization), don’t use virtio for disks of that VM, as they are not supported by ZFS. Use IDE or SCSI instead (also works with the virtio SCSI controller type).

Installation as Root File System

When you install using the Proxmox VE installer, you can choose ZFS for the root file system. You need to select the RAID type at installation time:

|

RAID0

|

Also called “striping”. The capacity of such volume is the sum of the capacities of all disks. But RAID0 does not add any redundancy, so the failure of a single drive makes the volume unusable. |

|

RAID1

|

Also called “mirroring”. Data is written identically to all disks. This mode requires at least 2 disks with the same size. The resulting capacity is that of a single disk. |

|

RAID10

|

A combination of RAID0 and RAID1. Requires at least 4 disks. |

|

RAIDZ-1

|

A variation on RAID-5, single parity. Requires at least 3 disks. |

|

RAIDZ-2

|

A variation on RAID-5, double parity. Requires at least 4 disks. |

|

RAIDZ-3

|

A variation on RAID-5, triple parity. Requires at least 5 disks. |

The installer automatically partitions the disks, creates a ZFS pool called rpool, and installs the root file system on the ZFS subvolume rpool/ROOT/pve-1.

Another subvolume called rpool/data is created to store VM images. In order to use that with the Proxmox VE tools, the installer creates the following configuration entry in /etc/pve/storage.cfg:

zfspool: local-zfs

pool rpool/data

sparse

content images,rootdir

After installation, you can view your ZFS pool status using the zpool command:

# zpool status

pool: rpool

state: ONLINE

scan: none requested

config:

NAME STATE READ WRITE CKSUM

rpool ONLINE 0 0 0

mirror-0 ONLINE 0 0 0

sda2 ONLINE 0 0 0

sdb2 ONLINE 0 0 0

mirror-1 ONLINE 0 0 0

sdc ONLINE 0 0 0

sdd ONLINE 0 0 0

errors: No known data errors

The zfs command is used to configure and manage your ZFS file systems. The following command lists all file systems after installation:

# zfs list

NAME USED AVAIL REFER MOUNTPOINT

rpool 4.94G 7.68T 96K /rpool

rpool/ROOT 702M 7.68T 96K /rpool/ROOT

rpool/ROOT/pve-1 702M 7.68T 702M /

rpool/data 96K 7.68T 96K /rpool/data

rpool/swap 4.25G 7.69T 64K -

ZFS RAID Level Considerations

There are a few factors to take into consideration when choosing the layout of a ZFS pool. The basic building block of a ZFS pool is the virtual device, or vdev. All vdevs in a pool are used equally and the data is striped among them (RAID0). Check the zpoolconcepts(7) manpage for more details on vdevs.

Performance

Each vdev type has different performance behaviors. The two parameters of interest are the IOPS (Input/Output Operations per Second) and the bandwidth with which data can be written or read.

A mirror vdev (RAID1) will approximately behave like a single disk in regard to both parameters when writing data. When reading data the performance will scale linearly with the number of disks in the mirror.

A common situation is to have 4 disks. When setting it up as 2 mirror vdevs (RAID10) the pool will have the write characteristics of two single disks in regard to IOPS and bandwidth. For read operations it will resemble 4 single disks.

A RAIDZ of any redundancy level will approximately behave like a single disk in regard to IOPS with a lot of bandwidth. How much bandwidth depends on the size of the RAIDZ vdev and the redundancy level.

A dRAID pool should match the performance of an equivalent RAIDZ pool.

For running VMs, IOPS is the more important metric in most situations.
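
The rules of thumb above can be condensed into a rough estimate of how a pool's IOPS compare to a single disk. This is only a sketch of the approximations stated in this section, not a benchmark; pool_iops_factor is a hypothetical helper, and the RAIDZ read factor assumes that the single-disk behavior applies to reads as well as writes.

```shell
#!/bin/sh
# Sketch: approximate IOPS of a pool as a multiple of one disk.
# Arguments: vdev type (mirror|raidz), number of vdevs, disks per vdev.
pool_iops_factor() {
    type=$1
    vdevs=$2
    width=$3
    case $type in
        # A mirror vdev writes like one disk; reads scale with the disk count.
        mirror) echo "write=$vdevs read=$(( vdevs * width ))" ;;
        # A RAIDZ vdev behaves roughly like one disk in regard to IOPS.
        raidz)  echo "write=$vdevs read=$vdevs" ;;
        *)      echo "unknown vdev type: $type" >&2; return 1 ;;
    esac
}

pool_iops_factor mirror 2 2   # 4 disks as 2 mirror vdevs (RAID10)
pool_iops_factor raidz 1 4    # 4 disks as a single RAIDZ vdev
```

For the 4-disk example, the mirror layout yields write=2 read=4, while a single RAIDZ vdev stays at write=1 read=1.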

Size, Space usage and Redundancy

While a pool made of mirror vdevs will have the best performance characteristics, the usable space will be 50% of the disks available, or less if a mirror vdev consists of more than 2 disks, for example in a 3-way mirror. At least one healthy disk per mirror is needed for the pool to stay functional.

The usable space of a RAIDZ type vdev of N disks is roughly N-P, with P being the RAIDZ-level. The RAIDZ-level indicates how many arbitrary disks can fail without losing data. A special case is a 4 disk pool with RAIDZ2. In this situation it is usually better to use 2 mirror vdevs for the better performance as the usable space will be the same.
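
The capacity rules above can be checked with a short calculation. The following sketch counts usable space in units of whole disks for a pool of equally sized disks; usable_disks is a hypothetical helper, and the RAIDZ case assumes a single vdev.

```shell
#!/bin/sh
# Sketch: usable capacity in units of one disk.
# mirror: arguments are total disks and disks per mirror vdev.
# raidz:  arguments are disks in the vdev (N) and the RAIDZ level (P).
usable_disks() {
    type=$1
    disks=$2
    param=$3
    case $type in
        mirror) echo $(( disks / param )) ;;
        raidz)  echo $(( disks - param )) ;;
        *)      echo "unknown vdev type: $type" >&2; return 1 ;;
    esac
}

usable_disks raidz 4 2    # 4-disk RAIDZ2
usable_disks mirror 4 2   # 4 disks as 2 two-way mirrors
usable_disks mirror 6 3   # 6 disks as 2 three-way mirrors
```

All three calls print 2. A 4-disk RAIDZ2 and two 2-way mirrors offer the same usable space, which is why the mirror layout is usually preferred there. Three-way mirrors give up a third disk per vdev for redundancy.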

Another important factor when using any RAIDZ level is how ZVOL datasets, which are used for VM disks, behave. For each data block the pool needs parity data which is at least the size of the minimum block size defined by the ashift value of the pool. With an ashift of 12 the block size of the pool is 4k. The default block size for a ZVOL is 8k. Therefore, in a RAIDZ2 each 8k block written will cause two additional 4k parity blocks to be written, 8k + 4k + 4k = 16k. This is of course a simplified approach and the real situation will be slightly different with metadata, compression and such not being accounted for in this example.

This behavior can be observed when checking the following properties of the ZVOL:

-

volsize

-

refreservation (if the pool is not thin provisioned)

-

used (if the pool is thin provisioned and without snapshots present)

# zfs get volsize,refreservation,used <pool>/vm-<vmid>-disk-X

volsize is the size of the disk as it is presented to the VM, while refreservation shows the reserved space on the pool which includes the expected space needed for the parity data. If the pool is thin provisioned, the refreservation will be set to 0. Another way to observe the behavior is to compare the used disk space within the VM and the used property. Be aware that snapshots will skew the value.

There are a few options to counter the increased use of space:

-

Increase the volblocksize to improve the data to parity ratio

-

Use mirror vdevs instead of RAIDZ

-

Use ashift=9 (block size of 512 bytes)

The volblocksize property can only be set when creating a ZVOL. The default value can be changed in the storage configuration. When doing this, the guest needs to be tuned accordingly and depending on the use case, the problem of write amplification is just moved from the ZFS layer up to the guest.
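
To see why a larger volblocksize improves the data to parity ratio, the simplified arithmetic from the RAIDZ2 example (8k + 4k + 4k = 16k) can be sketched as follows. This uses the same simplification as the text: one parity block of the minimum block size per parity level, ignoring padding, metadata and compression. raidz_block_footprint is a hypothetical helper.

```shell
#!/bin/sh
# Sketch: space written per ZVOL block under the simplified model.
# Arguments: volblocksize in bytes, ashift, RAIDZ level (number of parity blocks).
# Output: total bytes written and the share of that which is data, in percent.
raidz_block_footprint() {
    volblocksize=$1
    ashift=$2
    parity=$3
    sector=$(( 1 << ashift ))                    # minimum block size of the pool
    total=$(( volblocksize + parity * sector ))  # data plus parity blocks
    echo "$total $(( volblocksize * 100 / total ))"
}

raidz_block_footprint 8192 12 2    # 8k volblocksize on RAIDZ2, ashift=12
raidz_block_footprint 16384 12 2   # 16k volblocksize on RAIDZ2, ashift=12
```

Under this model an 8k block occupies 16384 bytes (50% data), while a 16k block occupies 24576 bytes (about 66% data).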

Using ashift=9 when creating the pool can lead to bad performance, depending on the disks underneath, and cannot be changed later on.

Mirror vdevs (RAID1, RAID10) have favorable behavior for VM workloads. Use them, unless your environment has specific needs and characteristics where RAIDZ performance characteristics are acceptable.

ZFS dRAID

In a ZFS dRAID (declustered RAID) the hot spare drive(s) participate in the RAID. Their spare capacity is reserved and used for rebuilding when one drive fails. This provides, depending on the configuration, faster rebuilding compared to a RAIDZ in case of drive failure. More information can be found in the official OpenZFS documentation. [2]

|

|

dRAID is intended for more than 10-15 disks in a dRAID. A RAIDZ setup should be better for a lower amount of disks in most use cases. |

|

|

The GUI requires one more disk than the minimum (i.e. dRAID1 needs 3). It expects that a spare disk is added as well. |

-

dRAID1 or dRAID: requires at least 2 disks, one can fail before data is lost

-

dRAID2: requires at least 3 disks, two can fail before data is lost

-

dRAID3: requires at least 4 disks, three can fail before data is lost

Additional information can be found on the manual page:

# man zpoolconcepts

Spares and Data

The number of spares tells the system how many disks it should keep ready in case of a disk failure. The default value is 0 spares. Without spares, rebuilding won’t get any speed benefits.

data defines the number of devices in a redundancy group. The default value is 8, except when disks - parity - spares is less than 8, in which case that lower number is used. In general, a smaller number of data devices leads to higher IOPS, better compression ratios and faster resilvering, but defining fewer data devices reduces the available storage capacity of the pool.
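
The default for data described above amounts to taking the smaller of 8 and disks - parity - spares. A minimal sketch, with draid_default_data as a hypothetical helper name:

```shell
#!/bin/sh
# Sketch: default number of data devices per dRAID redundancy group.
# Arguments: total disks, parity level, number of spares.
draid_default_data() {
    avail=$(( $1 - $2 - $3 ))
    if [ "$avail" -lt 8 ]; then
        echo "$avail"
    else
        echo 8
    fi
}

draid_default_data 12 1 1   # 10 disks remain, so the default of 8 applies
draid_default_data 6 2 1    # only 3 disks remain, so 3 is used
```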

Bootloader

Proxmox VE uses proxmox-boot-tool to manage the bootloader configuration. See the chapter on Proxmox VE host bootloaders for details.

ZFS Administration

This section gives you some usage examples for common tasks. ZFS itself is really powerful and provides many options. The main commands to manage ZFS are zfs and zpool. Both commands come with great manual pages, which can be read with:

# man zpool

# man zfs

Create a new zpool

To create a new pool, at least one disk is needed. The ashift should correspond to a sector size (2 to the power of ashift) that is equal to or larger than the sector size of the underlying disk.

# zpool create -f -o ashift=12 <pool> <device>
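
Since the sector size is 2 to the power of ashift, a suitable value can be derived from the sector size of the disk. The sketch below finds the smallest ashift whose sector size is at least the given size; ashift_for_sector_size is a hypothetical helper, and sdX in the comment is a placeholder for a real disk.

```shell
#!/bin/sh
# Sketch: smallest ashift such that 2^ashift >= sector size in bytes.
ashift_for_sector_size() {
    size=$1
    ashift=0
    while [ $(( 1 << ashift )) -lt "$size" ]; do
        ashift=$(( ashift + 1 ))
    done
    echo "$ashift"
}

ashift_for_sector_size 512    # prints 9
ashift_for_sector_size 4096   # prints 12

# On a running system, the physical sector size of a disk can usually be read
# from sysfs, for example:
#   ashift_for_sector_size "$(cat /sys/block/sdX/queue/physical_block_size)"
```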

|

|

Pool names must adhere to the following rules:

|

To activate compression (see section Compression in ZFS):

# zfs set compression=lz4 <pool>

Create a new pool with RAID-0

Minimum 1 disk

# zpool create -f -o ashift=12 <pool> <device1> <device2>

Create a new pool with RAID-1

Minimum 2 disks

# zpool create -f -o ashift=12 <pool> mirror <device1> <device2>

Create a new pool with RAID-10

Minimum 4 disks

# zpool create -f -o ashift=12 <pool> mirror <device1> <device2> mirror <device3> <device4>

Create a new pool with RAIDZ-1

Minimum 3 disks

# zpool create -f -o ashift=12 <pool> raidz1 <device1> <device2> <device3>

Create a new pool with RAIDZ-2

Minimum 4 disks

# zpool create -f -o ashift=12 <pool> raidz2 <device1> <device2> <device3> <device4>

Please read the section on ZFS RAID Level Considerations to get a rough estimate of the IOPS and bandwidth to expect before setting up a pool, especially when wanting to use a RAID-Z mode.

Extend RAIDZ-N

|

|

This feature only works starting with Proxmox VE 9 (ZFS 2.3.3). |

Assuming you have an existing <pool> with a RAIDZ-N <raidzN-M> vdev, you can add a new physical disk <device> using the following syntax:

zpool attach <pool> <raidzN-M> <device>

You can verify general success by running zpool status <pool> and get verbose output for all attached disks by running zpool list <pool> -v. To inspect the new capacity of your pool run zfs list <pool>.

Create a new pool with cache (L2ARC)

It is possible to use a dedicated device, or partition, as second-level cache to increase the performance. Such a cache device will especially help with random-read workloads of data that is mostly static. As it acts as an additional caching layer between the actual storage and the in-memory ARC, it can also help if the ARC must be reduced due to memory constraints.

# zpool create -f -o ashift=12 <pool> <device> cache <cache-device>

Here only a single <device> and a single <cache-device> were used, but it is possible to use more devices, as shown in Create a new pool with RAID.

Note that no mirror or RAID modes exist for cache devices; they are all simply accumulated.

If any cache device produces errors on read, ZFS will transparently divert that request to the underlying storage layer.

Create a new pool with log (ZIL)

It is possible to use a dedicated drive, or partition, for the ZFS Intent Log (ZIL). The ZIL is mainly used to provide safe synchronous transactions, so it often sits in performance-critical paths such as databases or other programs that issue fsync operations frequently.

By default, the pool itself is used as the ZIL location. Diverting the ZIL IO load to a separate device can help to reduce transaction latencies while relieving the main pool at the same time, increasing overall performance.

For disks to be used as log devices, directly or through a partition, it’s recommended to:

- Use fast SSDs with power-loss protection, as those have much smaller commit latencies.

- Use at least a few GB for the partition (or whole device), but using more than half of your installed memory won’t provide you with any real advantage.

# zpool create -f -o ashift=12 <pool> <device> log <log-device>

In the example above, a single <device> and a single <log-device> are used, but you can also combine this with other RAID variants, as described in the Create a new pool with RAID section.

You can also mirror the log device across multiple devices. This is mainly useful to ensure that performance doesn’t immediately degrade if a single log device fails.

If all log devices fail, the ZFS main pool itself will be used again until the log device(s) get replaced.

Add cache and log to an existing pool

If you have a pool without cache and log you can still add both, or just one of them, at any time.

For example, let’s assume you have a good enterprise SSD with power-loss protection that you want to use for improving the overall performance of your pool.

As the maximum size of a log device should be about half the size of the installed physical memory, the ZIL will most likely only take up a relatively small part of the SSD. The remaining space can be used as cache.

First you have to create two GPT partitions on the SSD with parted or gdisk.

Then you’re ready to add them to a pool:

# zpool add -f <pool> log <device-part1> cache <device-part2>

Just replace <pool>, <device-part1> and <device-part2> with the pool name and the two /dev/disk/by-id/ paths to the partitions.
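The sizing reasoning above (log partition no larger than about half the installed memory, the rest as cache) can be sketched as a small dry-run helper. This is only an illustration under stated assumptions: the function names half_mem_mib and print_log_cache_plan are hypothetical, sgdisk (from the gdisk package) is used for partitioning, and the commands are printed rather than executed.

```shell
#!/bin/sh
# Half of the installed memory in MiB: the upper bound for a useful log size.
# Optional argument: MemTotal in KiB (defaults to the value in /proc/meminfo).
half_mem_mib() {
    total_kib=${1:-$(awk '/^MemTotal:/ {print $2}' /proc/meminfo)}
    echo $(( total_kib / 1024 / 2 ))
}

# Print (do not run) a two-partition plan for an SSD:
# partition 1 for the log, partition 2 on the remaining space for the cache.
# Usage: print_log_cache_plan <disk> <log size in MiB>
print_log_cache_plan() {
    disk=$1
    log_mib=$2
    echo "sgdisk -n 1:0:+${log_mib}M $disk"
    echo "sgdisk -n 2:0:0 $disk"
}
```

For example, print_log_cache_plan <disk> "$(half_mem_mib)" prints the two sgdisk calls for the current host. The resulting partitions can then be passed to zpool add through their /dev/disk/by-id/ paths, as described above.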

You can also add ZIL and cache separately.

# zpool add <pool> log <log-device>

Changing a failed device

# zpool replace -f <pool> <old-device> <new-device>

Replacing a failed bootable device requires additional steps. Depending on how Proxmox VE was installed, it uses either systemd-boot or GRUB through proxmox-boot-tool [3], or plain GRUB, as bootloader (see Host Bootloader). You can check by running:

# proxmox-boot-tool status

The first steps of copying the partition table, reissuing GUIDs and replacing the ZFS partition are the same. To make the system bootable from the new disk, different steps are needed which depend on the bootloader in use.

# sgdisk <healthy bootable device> -R <new device>
# sgdisk -G <new device>
# zpool replace -f <pool> <old zfs partition> <new zfs partition>

Use the zpool status -v command to monitor how far the resilvering process of the new disk has progressed.

With proxmox-boot-tool:

# proxmox-boot-tool format <new disk's ESP>
# proxmox-boot-tool init <new disk's ESP> [grub]

ESP stands for EFI System Partition, which is set up as partition #2 on bootable disks when using the Proxmox VE installer since version 5.4. For details, see Setting up a new partition for use as synced ESP.

Make sure to pass grub as mode to proxmox-boot-tool init if proxmox-boot-tool status indicates your current disks are using GRUB, especially if Secure Boot is enabled!

With plain GRUB:

# grub-install <new disk>

Plain GRUB is only used on systems installed with Proxmox VE 6.3 or earlier, which have not been manually migrated to use proxmox-boot-tool yet.
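The bootloader-dependent sequence above can be summarized in a dry-run helper that prints the commands for one replacement. This is a sketch under assumptions: the function name print_boot_disk_replace and the mode keywords systemd-boot, grub and plain-grub are invented for this example, and nothing is executed.

```shell
#!/bin/sh
# Print (do not run) the commands for replacing a failed bootable ZFS disk.
# Usage: print_boot_disk_replace <healthy disk> <new disk> <pool> \
#            <old zfs partition> <new zfs partition> <new ESP> <mode>
# <mode> is a keyword of this sketch: systemd-boot, grub or plain-grub.
print_boot_disk_replace() {
    healthy=$1
    new=$2
    pool=$3
    old_part=$4
    new_part=$5
    new_esp=$6
    mode=$7

    # Common steps: copy the partition table, reissue GUIDs, replace the
    # ZFS partition.
    echo "sgdisk $healthy -R $new"
    echo "sgdisk -G $new"
    echo "zpool replace -f $pool $old_part $new_part"

    # Bootloader-specific steps.
    case "$mode" in
        systemd-boot)
            echo "proxmox-boot-tool format $new_esp"
            echo "proxmox-boot-tool init $new_esp"
            ;;
        grub)
            echo "proxmox-boot-tool format $new_esp"
            echo "proxmox-boot-tool init $new_esp grub"
            ;;
        plain-grub)
            echo "grub-install $new"
            ;;
        *)
            echo "unknown bootloader mode: $mode" >&2
            return 1
            ;;
    esac
}
```

Choose the mode according to the output of proxmox-boot-tool status, and double-check the device arguments before running the printed commands, since sgdisk -R overwrites the partition table of the target disk.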

Configure E-Mail Notification

ZFS comes with an event daemon ZED, which monitors events generated by the ZFS kernel module. The daemon can also send emails on ZFS events like pool errors. Newer ZFS packages ship the daemon in a separate zfs-zed package, which should already be installed by default in Proxmox VE.

You can configure the daemon via the file /etc/zfs/zed.d/zed.rc with your favorite editor. The required setting for email notification is ZED_EMAIL_ADDR, which is set to root by default.

ZED_EMAIL_ADDR="root"

Please note that Proxmox VE forwards mail addressed to root to the email address configured for the root user.

Limit ZFS Memory Usage

ZFS uses 50 % of the host memory for the Adaptive Replacement Cache (ARC) by default. For new installations starting with Proxmox VE 8.1, the ARC usage limit will be set to 10 % of the installed physical memory, clamped to a maximum of 16 GiB. This value is written to /etc/modprobe.d/zfs.conf.

Allocating enough memory for the ARC is crucial for IO performance, so reduce it with caution. As a general rule of thumb, allocate at least 2 GiB Base + 1 GiB/TiB-Storage. For example, if you have a pool with 8 TiB of available storage space then you should use 10 GiB of memory for the ARC.

ZFS also enforces a minimum value of 64 MiB.
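The sizing rules above can be written down as shell arithmetic. This is a minimal sketch: the function names are hypothetical, the inputs are whole numbers, and treating the 64 MiB minimum as a lower clamp of the installer default is an assumption of this example.

```shell
#!/bin/sh
# Rule of thumb from the text: 2 GiB base + 1 GiB per TiB of storage.
# Argument: pool storage in TiB (whole number). Prints GiB.
arc_rule_of_thumb_gib() {
    echo $(( 2 + $1 ))
}

# Default ARC limit for new installations since Proxmox VE 8.1, as described
# in the text: 10 % of physical memory, at most 16 GiB. The 64 MiB lower
# bound is applied here as an assumption of this sketch.
# Argument: installed memory in MiB. Prints MiB.
arc_installer_default_mib() {
    limit=$(( $1 / 10 ))
    if [ "$limit" -gt 16384 ]; then
        limit=16384
    fi
    if [ "$limit" -lt 64 ]; then
        limit=64
    fi
    echo "$limit"
}
```

For the 8 TiB pool from the example above, arc_rule_of_thumb_gib 8 gives 10, matching the 10 GiB stated in the text.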

You can change the ARC usage limit for the current boot (a reboot resets this change again) by writing to the zfs_arc_max module parameter directly:

echo "$((10 * 1024*1024*1024))" >/sys/module/zfs/parameters/zfs_arc_max

To permanently change the ARC limits, add (or change if already present) the following line to /etc/modprobe.d/zfs.conf:

options zfs zfs_arc_max=8589934592

This example setting limits the usage to 8 GiB (8 * 2^30 bytes).
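To avoid calculating the byte value by hand, the options line can be generated from a size in GiB. A minimal sketch, with arc_max_option being a hypothetical helper name:

```shell
#!/bin/sh
# Print the modprobe options line for a given ARC limit in GiB.
# Usage: arc_max_option <GiB>
arc_max_option() {
    echo "options zfs zfs_arc_max=$(( $1 * 1024 * 1024 * 1024 ))"
}
```

The printed line can then be added to /etc/modprobe.d/zfs.conf, replacing an existing zfs_arc_max line if one is already present.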

In case your desired zfs_arc_max value is lower than or equal to zfs_arc_min (which defaults to 1/32 of the system memory), zfs_arc_max will be ignored unless you also set zfs_arc_min to at most zfs_arc_max - 1.

echo "$((8 * 1024*1024*1024 - 1))" >/sys/module/zfs/parameters/zfs_arc_min
echo "$((8 * 1024*1024*1024))" >/sys/module/zfs/parameters/zfs_arc_max

This example setting (temporarily) limits the usage to 8 GiB (8 * 2^30 bytes) on systems with more than 256 GiB of total memory, where simply setting zfs_arc_max alone would not work.
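Whether zfs_arc_min has to be lowered as well follows directly from the 1/32 default. The check below is a sketch with a hypothetical function name; it takes the memory size and the desired limit in bytes and does not read any live ZFS parameters.

```shell
#!/bin/sh
GIB=$(( 1024 * 1024 * 1024 ))

# Succeeds (exit status 0) when setting zfs_arc_max alone would be ignored,
# that is, when the desired limit is lower than or equal to the default
# zfs_arc_min of 1/32 of the system memory.
# Usage: arc_max_needs_min_override <memory in bytes> <zfs_arc_max in bytes>
arc_max_needs_min_override() {
    mem_bytes=$1
    arc_max=$2
    default_min=$(( mem_bytes / 32 ))
    [ "$arc_max" -le "$default_min" ]
}
```

With 512 GiB of memory the default minimum is 16 GiB, so an 8 GiB limit needs the override; with 64 GiB of memory the default minimum is 2 GiB and the override is not needed.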

If your root file system is ZFS, you must update your initramfs every time this value changes:

# update-initramfs -u -k all

You must reboot to activate these changes.

SWAP on ZFS

Swap space created on a zvol can cause problems, such as blocking the server or generating a high IO load. This is often seen when starting a backup to an external storage.

We strongly recommend using enough memory, so that you normally do not run into low-memory situations. Should you need or want to add swap, it is preferred to create a partition on a physical disk and use it as a swap device. You can leave some space free for this purpose in the advanced options of the installer. Additionally, you can lower the “swappiness” value. A good value for servers is 10:

# sysctl -w vm.swappiness=10

To make the swappiness persistent, open /etc/sysctl.conf with an editor of your choice and add the following line:

vm.swappiness = 10

| Value | Strategy |
|---|---|
| vm.swappiness = 0 | The kernel will swap only to avoid an out of memory condition. |
| vm.swappiness = 1 | Minimum amount of swapping without disabling it entirely. |
| vm.swappiness = 10 | This value is sometimes recommended to improve performance when sufficient memory exists in a system. |
| vm.swappiness = 60 | The default value. |
| vm.swappiness = 100 | The kernel will swap aggressively. |
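Editing the configuration file by hand can leave duplicate vm.swappiness lines behind. The following sketch makes the persistent setting idempotent; set_swappiness_persistent is a hypothetical helper name, and the second argument exists only so the function can be tried on a scratch file first.

```shell
#!/bin/sh
# Set vm.swappiness persistently in a sysctl configuration file.
# Usage: set_swappiness_persistent <value> [<file>]
set_swappiness_persistent() {
    value=$1
    conf=${2:-/etc/sysctl.conf}
    tmp=$(mktemp) || return 1
    # Copy every line except existing vm.swappiness settings.
    # grep exits non-zero when it copies nothing, which is fine here.
    grep -v '^[[:space:]]*vm\.swappiness[[:space:]]*=' "$conf" >"$tmp" 2>/dev/null || true
    # Append the single, current setting.
    echo "vm.swappiness = $value" >>"$tmp"
    # Write back in place so ownership and permissions of the file are kept.
    cat "$tmp" >"$conf" && rm -f "$tmp"
}
```

The helper only changes the file; the running kernel still needs sysctl -w vm.swappiness=<value>, as shown above, or a reboot.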

Encrypted ZFS Datasets

Native ZFS encryption in Proxmox VE is experimental. Known limitations and issues include Replication with encrypted datasets [4], as well as checksum errors when using Snapshots or ZVOLs. [5]

ZFS on Linux version 0.8.0 introduced support for native encryption of datasets. After an upgrade from previous ZFS on Linux versions, the encryption feature can be enabled per pool:

# zpool get feature@encryption tank
NAME  PROPERTY            VALUE     SOURCE
tank  feature@encryption  disabled  local

# zpool set feature@encryption=enabled tank

# zpool get feature@encryption tank
NAME  PROPERTY            VALUE     SOURCE
tank  feature@encryption  enabled   local

There is currently no support for booting from pools with encrypted datasets using GRUB, and only limited support for automatically unlocking encrypted datasets on boot. Older versions of ZFS without encryption support will not be able to decrypt stored data.

It is recommended to either unlock storage datasets manually after booting, or to write a custom unit to pass the key material needed for unlocking on boot to zfs load-key.

Establish and test a backup procedure before enabling encryption of production data. If the associated key material/passphrase/keyfile has been lost, accessing the encrypted data is no longer possible.

Encryption needs to be set up when creating datasets/zvols, and is inherited by child datasets by default. For example, to create an encrypted dataset tank/encrypted_data and configure it as storage in Proxmox VE, run the following commands:

# zfs create -o encryption=on -o keyformat=passphrase tank/encrypted_data
Enter passphrase:
Re-enter passphrase:

# pvesm add zfspool encrypted_zfs -pool tank/encrypted_data

All guest volumes/disks created on this storage will be encrypted with the shared key material of the parent dataset.

To actually use the storage, the associated key material needs to be loaded and the dataset needs to be mounted. This can be done in one step with:

# zfs mount -l tank/encrypted_data
Enter passphrase for 'tank/encrypted_data':

It is also possible to use a (random) keyfile instead of prompting for a passphrase by setting the keylocation and keyformat properties, either at creation time or with zfs change-key on existing datasets:

# dd if=/dev/urandom of=/path/to/keyfile bs=32 count=1
# zfs change-key -o keyformat=raw -o keylocation=file:///path/to/keyfile tank/encrypted_data

When using a keyfile, special care needs to be taken to secure the keyfile against unauthorized access or accidental loss. Without the keyfile, it is not possible to access the plaintext data!
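One way to reduce the risk of exposing the keyfile is to create it with owner-only permissions from the start and to refuse overwriting an existing key. The following is a sketch under assumptions: make_raw_keyfile is a hypothetical helper, and it only covers file creation, not backups of the key.

```shell
#!/bin/sh
# Create a 32-byte random keyfile for keyformat=raw, readable only by its owner.
# Usage: make_raw_keyfile <path>
make_raw_keyfile() {
    keyfile=$1
    # Never overwrite an existing key: losing it makes the data inaccessible.
    if [ -e "$keyfile" ]; then
        echo "refusing to overwrite $keyfile" >&2
        return 1
    fi
    # The subshell keeps the restrictive umask from leaking into the caller.
    ( umask 077 && dd if=/dev/urandom of="$keyfile" bs=32 count=1 2>/dev/null ) || return 1
    # Verify that exactly 32 bytes were written.
    size=$(( $(wc -c <"$keyfile") ))
    [ "$size" -eq 32 ]
}
```

The resulting file can then be passed to zfs change-key through the keylocation property, as shown above. Keep a copy of the keyfile in a safe place outside the encrypted pool.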

A guest volume created underneath an encrypted dataset will have its encryptionroot property set accordingly. The key material only needs to be loaded once per encryptionroot to be available to all encrypted datasets underneath it.

See the encryptionroot, encryption, keylocation, keyformat and keystatus properties, the zfs load-key, zfs unload-key and zfs change-key commands and the Encryption section from man zfs for more details and advanced usage.

Compression in ZFS

When compression is enabled on a dataset, ZFS tries to compress all new blocks before writing them and decompresses them on reading. Already existing data will not be compressed retroactively.

You can enable compression with:

# zfs set compression=<algorithm> <dataset>

We recommend using the lz4 algorithm, because it adds very little CPU overhead. Other algorithms like lzjb and gzip-N, where N is an integer from 1 (fastest) to 9 (best compression ratio), are also available. Depending on the algorithm and how compressible the data is, having compression enabled can even increase I/O performance.

You can disable compression at any time with:

# zfs set compression=off <dataset>

Again, only new blocks will be affected by this change.

ZFS Special Device